What is a Universal Data Language?

A Universal Data Language (UDL) is a way of describing events and entities in behavioral event data, as well as the relationship between them. It's similar to the vocabulary and grammar of a language in that common rules are needed to create shared meaning.

Interpreting the tangled web of language doesn’t just happen by chance – we rely on a pool of shared rules associated with each word and phrase.

The problem is, languages aren’t easy to create – just count the number of Esperanto speakers in the world today.

A Universal Data Language builds definitions into the core of your data strategy, hardcoding meaning into each row of events. It is the glue that holds your data platform together by making sure each metric is accurate and explainable.

Ad hoc data definitions - how to confuse everyone

Relying on one conscientious Product Manager or Data Engineer to catalogue the maze of metadata necessary to effectively manage a data platform is like leaving a pile of receipts on the table and hoping someone will do your accounts. Yet this is exactly what so many companies do. It is wishful thinking to assume that your data dictionary spreadsheet will accurately define your events and entities.

Even if the definitions are accurately recorded and updated, how can there be any certainty that they’ll move smoothly from newcomer to newcomer? Teams, like data, are not self organizing and human error is inevitable.

Here are some of the consequences of an ad hoc approach to describing your data:

- Data dictionaries that only mean something to a small group

- Outdated, and therefore, incomplete data dictionaries

- Inaccurate entity definitions, which cause widespread confusion

- Naming conventions being used interchangeably - creating multiple definitions for a single entity

- Data which cannot be used across the whole business

- Future data teams bamboozled by the notes of their predecessors

- Communication barriers

- Poor outcomes with AI and advanced analytics (or none at all)

What is a Universal Data Language?

If our ‘random definitions’ look something like this:

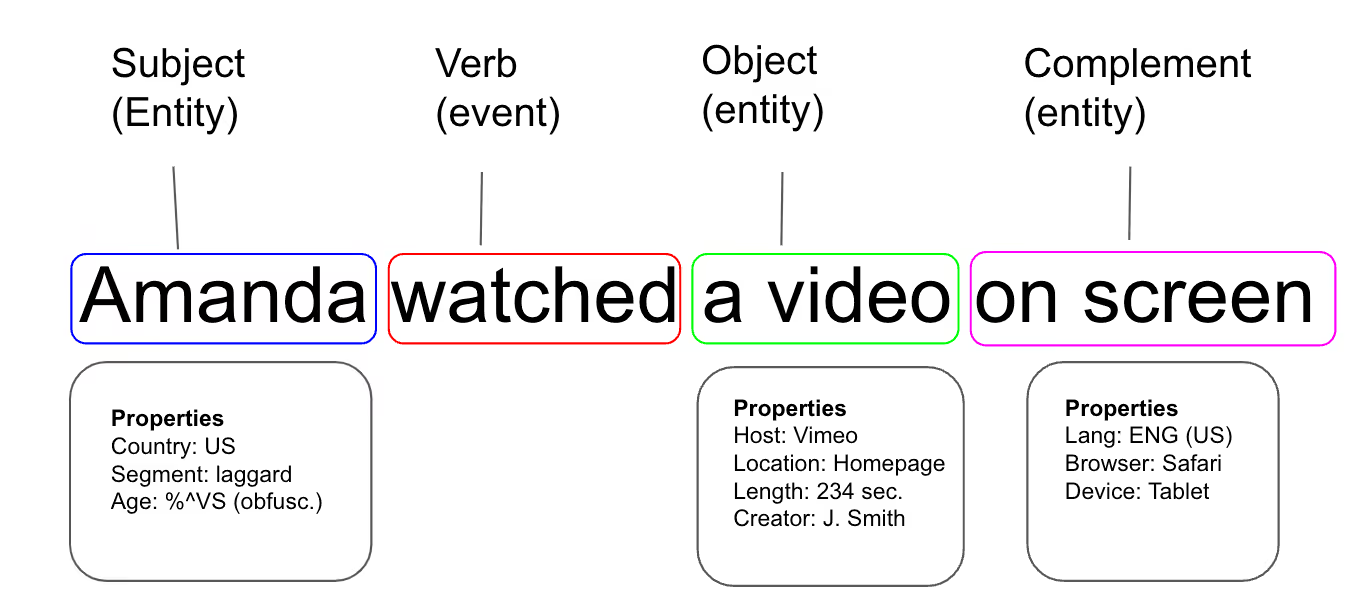

The Universal Data Language looks more like this:

And not because you tidied up in the garage – that never happens; it’s because you had a meeting to agree where all the tools went ahead of time and enforced it in the toolbox itself with grooves shaped perfectly for each object. This means that a wrench never gets put in a screwdriver slot.

To avoid laboring the metaphor, let’s talk data.

Behavioral events are made up of the event itself (a verb like ‘click’) and various entities and properties (the person clicking, the object being clicked and any contextual information about the person or the object).

A data language describes these events, and is comprised of a vocabulary and a grammar specific to your business context.

A data vocabulary: how you define the properties within your events and entities. It is best enforced through schema validation before storage. The schema is like the custom grooves in your toolbox, blocking data which doesn’t meet the specifications from entering your storage location.

A data grammar: the sum total of the rules you define before creating your data sets. For example, with every event you may only want one company entity and one user entity (obvious to humans but not for machines). Your grammar can be enforced through automated testing when generating the data, which holds data to the same standards as software.

Another crucial part of your data language is schema management – essentially your dictionary. Schemas should be easily discoverable and versioned to control for backwards compatibility.

Check out the open source tool 'Iglu' to learn more about schema management

The result is that the data which passes validation and lands in your warehouse is ready for advanced analytics and AI without cleaning or wrangling. The modular structure of the data with unlimited custom entities per event also means it is highly personalized and descriptive.

The benefits of this are so vast they barely need to be spelled out (but we will anyway):

- More data reaches production

- Projects see completion

- Teams are more motivated

- More time to create novel data strategies

- Extra time can be put into iterating on data applications

- Data scientists don't have to be data cleaners

- Better data ROI

- And so on...

How parts of a sentence can be mapped to events, entities and properties

With our shared language we can add more entities and properties to the power of n – city, product activation status, user intent, in addition to different levels of aggregation (event level, session level or user level).

All this can be achieved when you have a deep understanding of what each event means and how it is created, but the real impact comes after we create these tables.

Take a technical look at the structure of Snowplow event data

How most data analytics platforms oversimplify their event grammars

A crucial detail missed by most platforms, such as CDPs and packaged analytics tools, is that a given entity can shift from being a subject to an object or complement, as well as existing in various events, depending on the situation and the internal logic of your digital product.

For example, it is often falsely assumed that the end user is the subject in all cases.

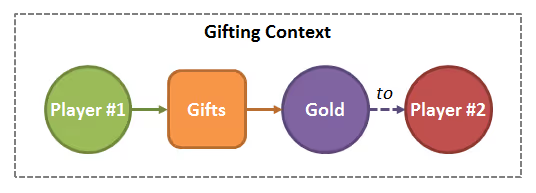

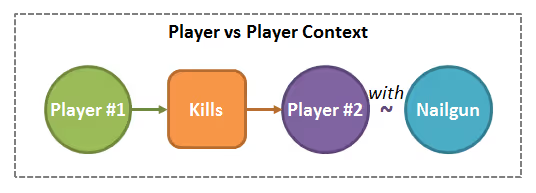

Here’s an example from the gaming industry:

- User #1 gifts gold to user #2

- User #2 kills user #3

- User #2 levels up

In a gifting screen within the game (Context), the player (Subject) gifts (Verb) some gold (Direct Object) to another player (Indirect Object).

During a two-player skirmish (Context), the first player (Subject) kills (Verb) the second player (Direct Object) using a nailgun (Prepositional Object). This illustrates how your end-users can be the Object of events, not just their Subjects.

Here we illustrate a 'reflexive' verb (a verb for which the subject and object are the same): through grinding (Context), the player (Subject) levels herself up (verb, reflexive).

As we can see from this, the same entities will be found as Subject, Direct Object, Indirect Object or Prepositional Object depending on the event.

A Universal Data Language does not make this assumption, and rather treats entities as something that can occupy various positions in the grammar of a given event.

This gives our data language one of the defining and essential qualities of a real language: flexibility.

Building data applications with Universal Data Language

A Universal Data Language helps to create powerful data applications, from real-time recommendation engines for editorial analytics to personalization engines for e-commerce, and marketing attribution.

AI and advanced analytics are highly data intensive, and the data you feed these apps needs to have certain attributes in order to be effective.

The data must be:

- Reliable: this is the freshness of data. When meaning is hardcoded from UDL, the low latency improves the effectiveness of data applications.

- Accurate: i.e., the data describes exactly what is attempts to describe: 400 page views means 400 page views. Having a tightly enforced tracking plan means the data is far more accurate.

- Explainable: the machine and human readable nature of JSON schemas means that data can be explained between teams and between systems. (Snowplow has also built a tracking catalog using common English in our UI, so less technical team members can see exactly what is being tracked. This is a further step towards full explainability)

- Predictive: the modular structure of the data with unlimited entities and properties, as well as the enforced quality of the resulting data means AI and machine learning model can make better predictions.

- Compliant: data which is tightly enforced and defined is by definition highly compliant. It is auditable and because you know exactly what each part of your event means, you can control access, storage locations, and anything else necessary for local compliance.

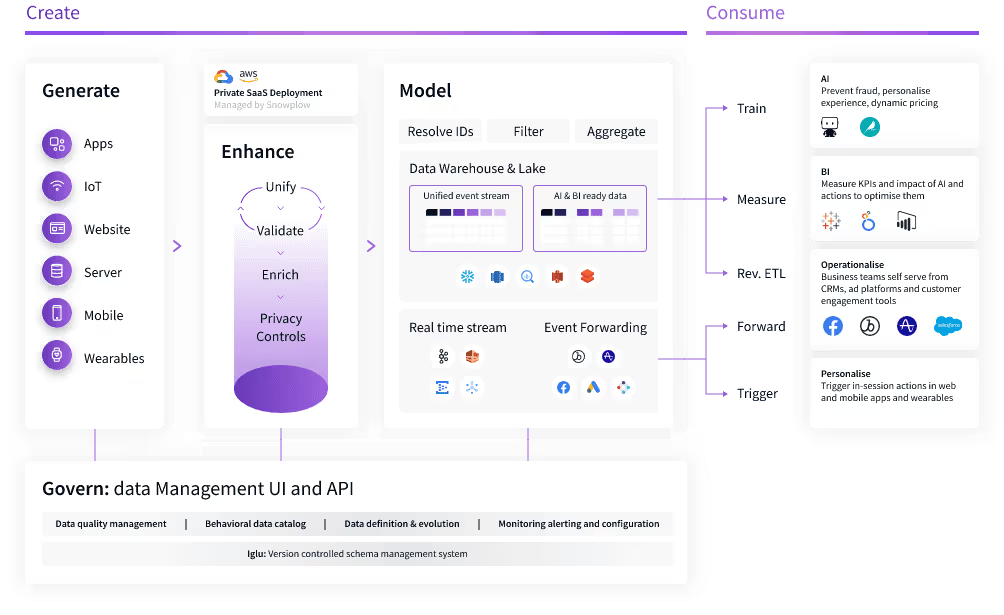

Use Snowplow to power your AI and advanced analytics

Snowplow was founded with the belief that data teams should spend their time innovating, not extracting and wrangling behavioral data from CDP’s or analytics platforms.

As the leader in Data Creation, Snowplow empowers more than 10,000 organizations, including Strava, Autotrader, and Software.com to purposefully create behavioral data to unlock transformative AI and advanced analytics directly from their warehouse, lake or in a real-time stream.

With our open-core technology, teams can generate, govern and model high-quality, granular behavioral data within their own cloud. Equipped with AI and BI-ready, teams can focus on creating pioneering data products across their business.