What is Clickstream Data? Definition & Guide for the AI Era

Clickstream data has always told you how your customers behave online. Every page view, every abandoned shopping cart, every scroll through a product listing leaves a trace. Combine those traces and you get a picture of user behavior across their entire online journey.

But here's the thing. The definition of "user" is expanding. Fast. According to Imperva's 2025 Bad Bot Report, automated traffic has now surpassed human activity, accounting for 51% of all web traffic. And the trajectory is only upward. AI agents, bots, and crawlers are all generating clickstream data across your digital estate right now, whether you're collecting it deliberately or not.

At Snowplow, we process over one trillion events per month across more than two million websites and applications. Many of our customers were already concerned about the growing impact of bots on their analytics before AI agents even arrived. They want to distinguish bots from humans, and then distinguish bots from one another. This guide explains what clickstream data is, how it has evolved, and why modern data teams must now account for both human and AI behavior.

What is Clickstream Data?

Clickstream data is a chronological record of every action a user takes within a digital experience. That could be a website, mobile app, desktop application, or any other digital platform. It captures those actions in real time, as they happen.

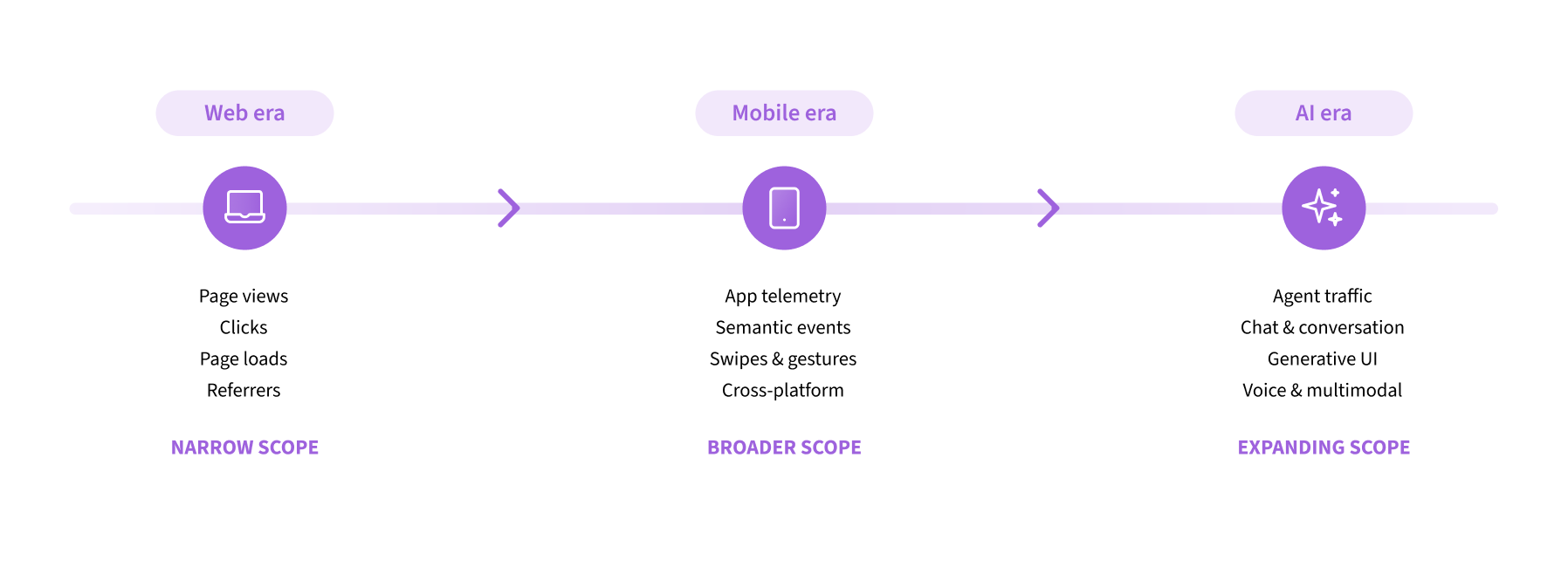

The term goes back to the early web, when the only things you could really do were load a page, scroll down it, and click a link. So "clickstream" described exactly that: a stream of clicks.

Then mobile apps expanded the scope. Swipes, pinches, taps. Interaction types that didn't exist in a desktop browser. At the same time, the industry moved from raw interaction data toward semantic events. So rather than recording "a user clicked a button," modern clickstream data started capturing behaviors like "a customer added a product to their basket." Context and meaning became part of the data.
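As a rough illustration of that shift, the Python sketch below models the same interaction twice: once as a raw click and once as a semantic event. The class and field names (`RawClick`, `AddToBasket`, `product_sku`, and so on) and all the values are invented for this example; they are not a Snowplow schema.

```python
from dataclasses import dataclass, asdict


@dataclass
class RawClick:
    """A raw interaction: which element was clicked, with no business meaning."""
    timestamp: str
    user_id: str
    page_url: str
    element_id: str


@dataclass
class AddToBasket:
    """A semantic event: the same click described as a business action."""
    timestamp: str
    user_id: str
    product_sku: str
    quantity: int
    unit_price: float
    currency: str


# Hypothetical values, for illustration only.
raw = RawClick(
    timestamp="2025-01-15T10:30:00Z",
    user_id="user-123",
    page_url="https://example.com/products/42",
    element_id="btn-primary-7",
)
semantic = AddToBasket(
    timestamp="2025-01-15T10:30:00Z",
    user_id="user-123",
    product_sku="SKU-42",
    quantity=1,
    unit_price=19.99,
    currency="GBP",
)

print(asdict(raw))
print(asdict(semantic))
```

The raw record only says that a button was pressed. The semantic record can be analyzed directly (which product, how many, at what price) without reverse-engineering what `btn-primary-7` meant on that page.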

Now AI agents are driving the next expansion. And it's bigger than most people realize. This isn't just about new users showing up in your analytics. It's a widening of what clickstream data actually contains.

Consider two examples. First, concierge agents are appearing inside traditional digital experiences. Your customers aren't just clicking around your site anymore. They're talking to an AI assistant that's helping them accomplish something. That conversational data tells you directly who the customer is and what they're trying to do. Two things that were historically very difficult to infer from clickstream data alone.

Second, generative UI means agents can dynamically create digital experiences that users then engage with. Tools like count.co and gamma.app blend chat and traditional UX elements seamlessly. The actions people take inside these interfaces are all part of the clickstream, but they look nothing like traditional page views and clicks.

This expansion will continue. Voice is already a viable input method. Smart glasses could mean tracking eye movements. The term "clickstream" will gradually lose its meaning as clicking becomes a smaller share of what the data actually captures, and more of it describes the totality of human and AI interactions across every channel.

Examples of Clickstream Data

Traditional human-generated clickstream data includes: entry points, page views and time on page, scroll depth, button and CTA clicks, items added to or removed from shopping carts, cart abandonment events, form submissions, checkout completions, and exit points.

Collecting clickstream data today also means capturing bot activity: server log requests from known crawler user-agents (Googlebot, Bingbot), high-frequency page visits with no scroll depth or dwell time, and sessions from cloud provider IP ranges rather than consumer ISPs.

And then there's AI agent activity, which behaves differently from both: requests from LLM crawlers (GPTBot, PerplexityBot, ClaudeBot) collecting content for AI training and AI-generated answers, referral sessions from AI platforms like ChatGPT and Perplexity, sessions where engagement signals drop abruptly mid-session (suggesting an agentic browser has taken over), and conversational interactions with an embedded AI assistant, which capture customer intent directly.
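A first pass at separating these groups can be pictured as a simple lookup on the user-agent string and the referrer. This is a minimal sketch, not a complete detector. The function name `classify_request` is hypothetical, and the token lists and referrer hosts are illustrative assumptions that are far from exhaustive. Agentic browsers that present as ordinary Chrome will come back as `unclassified`, which is exactly why behavioral signals are needed as well.

```python
from urllib.parse import urlparse

# Illustrative, non-exhaustive lists. A real deployment would need
# maintained sources rather than hard-coded tuples.
SEARCH_CRAWLER_TOKENS = ("Googlebot", "Bingbot")
AI_CRAWLER_TOKENS = ("GPTBot", "PerplexityBot", "ClaudeBot")
AI_REFERRER_HOSTS = ("chatgpt.com", "perplexity.ai")  # assumed referrer domains


def _contains_any(user_agent: str, tokens: tuple) -> bool:
    """Case-insensitive substring match of any token in the user-agent."""
    ua = user_agent.lower()
    return any(token.lower() in ua for token in tokens)


def classify_request(user_agent: str, referrer: str = "") -> str:
    """Label a request using only its user-agent and referrer headers."""
    if _contains_any(user_agent, AI_CRAWLER_TOKENS):
        return "ai_crawler"
    if _contains_any(user_agent, SEARCH_CRAWLER_TOKENS):
        return "search_crawler"
    host = urlparse(referrer).hostname or ""
    if any(host == h or host.endswith("." + h) for h in AI_REFERRER_HOSTS):
        return "ai_referral"
    return "unclassified"


print(classify_request("Mozilla/5.0 (compatible; GPTBot/1.0; +https://openai.com/gptbot)"))
print(classify_request("Mozilla/5.0 (Windows NT 10.0) Chrome/120.0", "https://chatgpt.com/"))
```

User-agent strings are self-declared and trivially spoofed, so a lookup like this only catches traffic that chooses to identify itself.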

Why Clickstream Data Matters More Than Ever

Clickstream data has always mattered for understanding customer trends and informing marketing and product strategies. But digital experiences are being reshaped by AI agents. That changes what's at stake.

Customers are using AI agents to act on their behalf. Enterprises are deploying agents to deliver better experiences. We're seeing entirely new interaction patterns: customer-to-agent-to-enterprise, even customer-to-agent-to-agent-to-enterprise. Clickstream data is foundational to navigating all of it.

Your metrics are only as clean as your data. If your analytical tools can't distinguish human visitors from bots and AI agents, your core metrics are unreliable. Inflated bounce rates. Suppressed conversion rates. A/B tests and ML models trained on mixed behavioral data, learning from the wrong signals. Traditional bot detection relies on known user-agent strings and IP blocklists. Agentic browsers may present as regular Chrome sessions. The problem is now significantly harder.
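To see how quickly mixed traffic distorts a headline metric, here is a small worked example in Python. Every number in it is hypothetical and chosen only to keep the arithmetic easy to follow.

```python
def conversion_rate(orders: int, sessions: int) -> float:
    """Orders divided by sessions, as a fraction."""
    return orders / sessions


# Hypothetical traffic for one reporting period.
human_sessions = 10_000
bot_sessions = 5_000   # automated sessions that never purchase
orders = 300           # all placed by humans

blended = conversion_rate(orders, human_sessions + bot_sessions)
human_only = conversion_rate(orders, human_sessions)

print(f"Blended conversion rate: {blended:.1%}")        # 2.0%
print(f"Human-only conversion rate: {human_only:.1%}")  # 3.0%
```

In this made-up scenario, the unfiltered dashboard understates conversion by a third. An A/B test split across the same mixed traffic would carry the same distortion into both arms.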

Understand what your customers actually came to do. Clean clickstream data, especially when it includes conversational data from on-site or in-app AI agents, gives you a direct signal of customer intent. If a customer types "I need a return label for an order placed last Tuesday" into a chat agent, you know exactly what they came to do. That data-driven insight is far richer than anything you could infer from clicks alone.

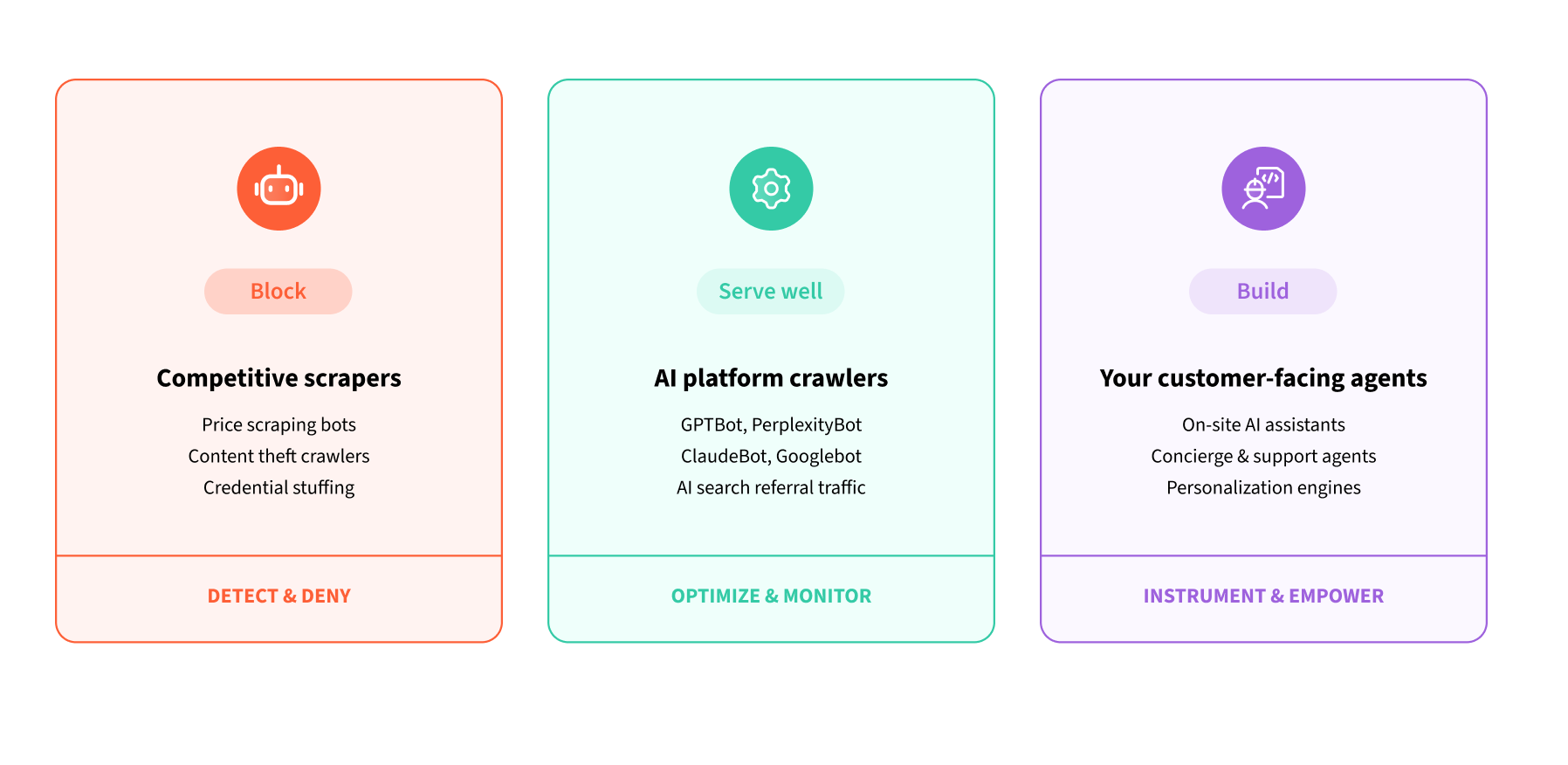

Manage agents strategically. Enterprises face three distinct situations with non-human traffic. Some agents are competitive scrapers you'll want to block. Others, like GPTBot or PerplexityBot, are indexing your content for AI-generated answers and driving real referral traffic. You'll want to serve those well. And then there are your own customer-facing agents, which need rich, real-time clickstream context to deliver personalized experiences. Without visibility across all three, you can't make these decisions deliberately.

Optimize for AI discovery. AI platforms are becoming a significant source of referral traffic. Understanding which platforms crawl your content, how frequently, and which pages they prioritize lets you optimize for AI discoverability, not just traditional search.

How is Clickstream Data Collected?

Clickstream data is collected across every digital platform: websites, mobile apps, desktop apps, TV apps, and more. The right approach instruments tracking across all layers of the application stack.

Client-side SDKs. JavaScript SDKs embedded in web pages, and equivalent SDKs for mobile and desktop platforms, capture user interactions in real time. These remain essential for rich human behavioral signals like mouse movement, scroll depth, and interaction timing, which help distinguish human sessions from automated ones.

Server-side tracking. Server-side tracking captures events from the application backend, independent of the browser or app. It's more reliable because it is unaffected by ad blockers, browser privacy settings, or JavaScript execution failures, and it was best practice long before AI agents became a consideration.

CDN and server log ingestion. Server logs record every HTTP request to your infrastructure, including requests from AI crawlers that never execute JavaScript. Ingesting CDN logs from providers like Cloudflare, Vercel, and AWS CloudFront surfaces this hidden layer of traffic, showing you exactly who (and what) is visiting your properties.
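To give a feel for what log ingestion involves, the sketch below turns one access-log line into a structured event. Each CDN has its own log format, so this example assumes the widely used Combined Log Format rather than any specific provider's schema. The function name `parse_log_line` and the sample line are hypothetical.

```python
import re
from typing import Optional

# Combined Log Format:
# ip ident user [time] "method path protocol" status size "referrer" "user-agent"
COMBINED_LOG = re.compile(
    r'^(?P<ip>\S+) \S+ \S+ \[(?P<time>[^\]]+)\] '
    r'"(?P<method>\S+) (?P<path>\S+) [^"]*" '
    r'(?P<status>\d{3}) (?P<size>\S+) '
    r'"(?P<referrer>[^"]*)" "(?P<user_agent>[^"]*)"$'
)


def parse_log_line(line: str) -> Optional[dict]:
    """Return the log line as a dict, or None if it does not match the format."""
    match = COMBINED_LOG.match(line.strip())
    if match is None:
        return None
    event = match.groupdict()
    event["status"] = int(event["status"])
    return event


# Made-up sample line using a documentation-reserved IP address.
sample = (
    '203.0.113.7 - - [10/Oct/2025:13:55:36 +0000] "GET /pricing HTTP/1.1" '
    '200 5123 "-" "Mozilla/5.0 (compatible; GPTBot/1.0; +https://openai.com/gptbot)"'
)

event = parse_log_line(sample)
print(event["path"], event["status"], event["user_agent"])
```

A request like this never runs a JavaScript tracker, so the log line is the only record that the visit happened.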

The unified approach. Siloing these streams creates blind spots. The right architecture routes all three into a single data warehouse, where human and agent behavior can be compared, segmented, and modeled together. You can aggregate data across all sources to build a single, accurate picture of online activities across your digital properties.

From Detection to Real-Time Response

Identifying AI agents in your clickstream data is only the first step. The real value is acting on that knowledge in real time, while the agent is still on your site.

Real-time behavioral scoring uses clickstream signals like mouse movement, scroll velocity, session pacing, and engagement depth to classify a session as human or agent as it unfolds. That classification can trigger an adaptive response: serving a condensed page to a detected agent, prompting human re-engagement when an agentic browser takes over, or surfacing a brand-owned AI assistant when a user returns to active control.
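The idea can be sketched as a small rule-based scorer. This is a deliberately simplified illustration under stated assumptions, not how Snowplow Signals or any production detector works: the `SessionSignals` fields, the point weights, the two-second pacing cutoff, and the threshold of 70 are all invented for the example.

```python
from dataclasses import dataclass


@dataclass
class SessionSignals:
    """Running totals for a session in progress (hypothetical fields)."""
    mouse_moves: int
    scroll_events: int
    page_views: int
    duration_seconds: float


def agent_score(signals: SessionSignals) -> int:
    """Return 0-100 points; higher means the session looks more automated."""
    score = 0
    if signals.mouse_moves == 0:
        score += 40  # no pointer activity at all
    if signals.scroll_events == 0:
        score += 20  # pages loaded but never scrolled
    seconds_per_page = signals.duration_seconds / max(signals.page_views, 1)
    if seconds_per_page < 2.0:
        score += 40  # pacing faster than a person is likely to read
    return score


def choose_response(score: int, threshold: int = 70) -> str:
    """Map the score to one of the adaptive responses described above."""
    if score >= threshold:
        return "serve_condensed_page"
    return "serve_full_experience"


fast_silent = SessionSignals(mouse_moves=0, scroll_events=0, page_views=12, duration_seconds=6.0)
browsing = SessionSignals(mouse_moves=85, scroll_events=14, page_views=4, duration_seconds=180.0)

print(choose_response(agent_score(fast_silent)))
print(choose_response(agent_score(browsing)))
```

Because the score is recomputed as events arrive, the same session can cross the threshold in either direction, for example when an agentic browser hands control back to the user. A real system would more likely learn its weights from labeled sessions than hard-code them.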

Traditional bot management is defensive: block, filter, exclude. Real-time detection lets you play offense. You treat agents as a distinct class of visitor and decide deliberately how to respond.

How Snowplow Collects Clickstream Data

We built Snowplow for comprehensive, multi-source clickstream data collection. First-party tracking SDKs for web, mobile, and server-side platforms. Built-in enrichments that classify user agents, IP addresses, and autonomous systems at ingestion, flagging known crawlers and AI agents as data enters the pipeline. CDN and server log integrations with Cloudflare, Vercel, and AWS CloudFront to surface traffic that never executes JavaScript. And Snowplow Signals for real-time behavioral profiling, detecting agent activity, and powering adaptive agentic decisioning.

We're also developing Signals Agents. As the scope of clickstream data keeps expanding, more and more of it will be unstructured: chat transcripts, voice interactions, generative UI sessions. Signals Agents sit inside the pipeline itself, turning that unstructured data into structured, aggregated signal in real time, optimized for customer-facing agent experiences. Without agents in the pipeline, teams simply won't be able to keep up with the volume and variety of what clickstream data is becoming.

Want to see how we handle clickstream data for the agentic era? Request a demo and speak with our team.

Clickstream Data: A Definition That Keeps Expanding

Clickstream data started as a record of clicks on web pages. It expanded to encompass mobile apps and semantic events. Now it's expanding again to include AI agents acting on behalf of users, conversational interactions with on-site assistants, and the dynamic interfaces of generative UI.

Today, most organizations treat their support data (Zendesk, Intercom) as completely separate from their clickstream data. That distinction won't last. As more customer interactions happen through AI agents, chat, and voice, those data streams will merge. The real-time pipelines that process clickstream data today will likely process all of it tomorrow.

Each expansion has made clickstream data more valuable and more complex. The teams that stay ahead are those that treat each new expansion not as noise to filter out, but as intelligence to understand and act on.