Not All AI Agents Are the Same — and It Matters for Your Data Strategy

AI agents are moving fast in the world of customer experience. The way users interact with our brands is changing dramatically and quickly. With so many new ways for customers to engage with your brand and your digital estate, the question becomes: how should businesses focus on agentic experiences? And more specifically, how should they think about AI agent analytics for each type of agent?

This post introduces a classification framework to help answer that question. It is the first in a two-part series on AI agents and how we’re thinking about the way they serve end users in an AI-first world.

The current industry conversation on AI Agents

Currently, in early 2026, the majority of conversations around AI and agentic capabilities fall into two camps:

- Marketing/AIO and visibility (the business perspective)

- DevOps/LLM-Ops (the technical perspective)

Marketing, SEO, and AI visibility conversations focus on the presence of AI bots crawling and indexing your content, users’ personal AI apps pulling information from websites, and agentic commerce systems allowing agents to perform transactions. These are AI interactions not controlled by a brand directly, and instead are in the hands of the end user.

The DevOps or LLM-Ops view comes from the traditional logging and monitoring perspective: gaining observability into the behavior of in-house agentic systems. Concerns such as uptime, token usage, latency, cost management and tracing are of high importance as DevOps/LLM-Ops professionals work to ensure these new business applications run as smoothly and efficiently as possible. Dedicated agent observability platforms, such as Langfuse and Arize, allow LLM-Ops teams to monitor all of the above.

“Agent Observability tells you if your agent is working. AI Agent Analytics tells you if your agent is working for your customers.”

Despite this focus from businesses, there is currently very little discussion on another area of AI/agentic development: agentic applications that are built and owned by brands, and embedded directly into their products.

AI Agents

“An AI agent is an LLM with agency. It has the ability to reason about its own actions, make decisions and (crucially) take actions in the real world.”

There is a distinction to be made between “systems that respond” and “systems that act”.

The infrastructure for building agentic applications has matured rapidly through 2025 and 2026. Businesses are now using agents to build all kinds of new applications for business users and customers, rather than just call-and-response LLM chatbot interfaces.

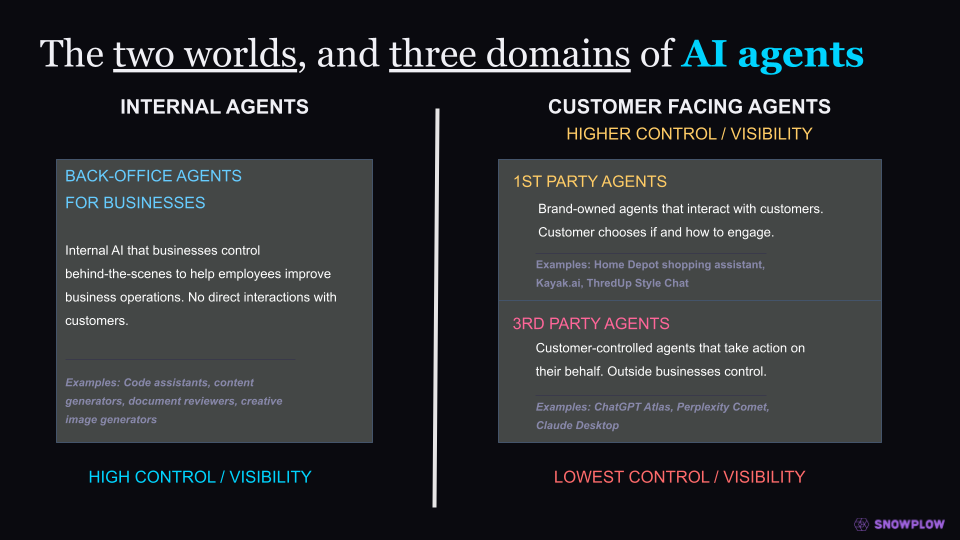

I now want to get specific about how we classify the different kinds of agentic applications common in 2026, because not all agents are built the same.

A taxonomy of AI Agents

Now that we know what an agent is, let’s classify them so we can clearly delineate their functions.

3rd Party Agents - Customer-Side Agents

3rd party agents are agentic systems used and controlled by the end user. The easiest example is an app such as Claude Desktop or ChatGPT, which users run on their own computer and which can take agentic actions on their behalf. 3rd party agents also cover AI/agentic browsers, such as ChatGPT Atlas and Perplexity’s Comet browser, as well as AI crawlers triggered by these agents.

The main concept to understand with 3rd party agents is that these are outside of the control of the organization. The end user and the LLM/AI app developer are in control. They choose the prompts, the tools the agent has access to, and instruct the agent on how the result should look and how it should behave. Given the end user is the one in control, this also results in lower visibility into what’s going on, from the brand’s perspective.

Back-Office Agents - Internal Agents

Companies are investing heavily in developing agentic applications for their own staff. According to a 2025 survey from MIT Sloan Management Review and BCG, 35% of organizations are already using agentic AI, with another 44% planning to deploy it soon. Use cases are wide-ranging: agents that create marketing copy or review legal contracts, natural-language knowledge base agents, and code assistants. These agents give the brand the highest level of control possible, and they are never surfaced to end customers.

1st Party Agents - Brand-Side Agents

1st party agents are owned and controlled by the brand itself. These customer-facing agentic experiences are embedded in the brand’s product experience, directly in the website or app that customers interact with.

These 1st party agents give the brand the highest degree of control over what the agent does and how it behaves. The agentic app developer at the business chooses which tools and APIs the agent has access to, the UI and UX of the agentic experience, and how it integrates with the wider product(s).

This elevated degree of control is the key differentiating factor that brands now have, allowing them to augment and enrich their entire customer experience (CX) with agentic capabilities.

Of the three kinds of agents outlined above, the first two (3rd party agents and back-office agents) have received the majority of conversation and mind-share over the last year or so. 1st party agents, by contrast, are still extremely new in the marketplace, but are potentially the most disruptive class of agents.

Why customer-side, 3rd party agents are mostly a familiar problem

While agents controlled by end customers accessing your products are a genuinely novel problem space, I believe there are a couple of key reasons why this area has received the most focus from the market so far:

- These have moved the fastest

- They are a more familiar problem

The big AI research labs (Anthropic and OpenAI, along with Google and others) have been able to quickly develop solutions to put in end users’ hands to allow them to use agents to perform tasks on their behalf.

The second reason comes down to how agents behave when accessing your brand’s website and products. They still make HTTP requests, access web pages, and trigger behavioral events. After all, agents are bots: very smart bots, but still bots. So in a lot of ways, this is very similar to problems we’ve addressed in digital analytics for a very long time.

“The challenge of understanding which of your data represents human behavior vs an agent’s behavior is new, but feels familiar.”

The key questions of “who is visiting our website?”, “how are end users discovering our content?” and “what are they doing when they land on the site?” are VERY familiar questions to ask; we just have to shift the lens through which we look at the problem.

The existing tools need significant updating and tweaking to be able to help businesses understand this new wave of agentic traffic, but most people in this space feel comfortable (for the most part) in how they can conceptually tackle this problem.
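To make the "familiar problem" concrete, here is a minimal sketch of separating self-identifying agent traffic from presumed human traffic using the User-Agent header. The marker list and the labels are illustrative assumptions, not a complete or authoritative registry; check each vendor's published crawler documentation before relying on any of them. Agentic browsers may present an ordinary browser User-Agent, so this approach only catches agents that announce themselves.

```python
from collections import Counter

# Assumed, non-exhaustive substrings that some AI vendors document for their
# crawlers and user-triggered fetchers. Treat this mapping as illustrative.
AGENT_UA_MARKERS = {
    "GPTBot": "ai_crawler",
    "ClaudeBot": "ai_crawler",
    "PerplexityBot": "ai_crawler",
    "ChatGPT-User": "user_triggered_agent",
}


def classify_user_agent(user_agent: str) -> str:
    """Return a coarse traffic label based on the User-Agent string."""
    ua = user_agent.lower()
    for marker, label in AGENT_UA_MARKERS.items():
        if marker.lower() in ua:
            return label
    # Anything that does not self-identify is only *presumed* human.
    return "presumed_human"


def summarize_traffic(user_agents: list[str]) -> Counter:
    """Count requests per traffic label."""
    return Counter(classify_user_agent(ua) for ua in user_agents)
```

Running `summarize_traffic` over the User-Agent column of a request log gives a first rough split of traffic, which is the same shape of question bot filtering has always asked.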

From an AI agent analytics perspective, 1st party brand-owned agents require an entirely different way of thinking about the core problem

Branded agents, on the other hand, require an entirely new way of thinking. Below are some reasons why we need to rethink digital analytics when it comes to 1st party agents.

1. The non-linear interaction model

A lot of traditional web/digital analytics is all about funnels: a purchase funnel, a sign-up funnel, the digital marketing funnel and so on. All of these are linear user journeys with predictable, known steps in a known order, carefully crafted by UX and CX professionals over many years.

An agentic experience essentially rips up that rule book.

Agents are non-deterministic, probabilistic systems. Depending on the model and the tools it has access to, an agent can go in any number of directions in a somewhat unpredictable manner (intentionally so, in most cases).

The agent might search for products, or look up support tickets, or add a product to the user’s cart, or look up stock levels, or make a booking. Or it might ask for more clarifying information, or refer the user to a documentation page, or push back and say something isn’t possible. This model is difficult for analysts to reason about, analyze and optimize.
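One way to reason about journeys like this, sketched below, is to count transitions between agent actions rather than forcing them into a fixed funnel. The action names and the shape of the session data are hypothetical; the point is only that the unit of analysis becomes "what followed what" instead of "which step did the user drop off at".

```python
from collections import Counter

# Each session is the ordered list of actions the agent took (hypothetical names).
Session = list[str]


def transition_counts(sessions: list[Session]) -> Counter:
    """Count how often one agent action was immediately followed by another."""
    counts: Counter = Counter()
    for steps in sessions:
        # zip(steps, steps[1:]) yields consecutive (from_action, to_action) pairs.
        counts.update(zip(steps, steps[1:]))
    return counts


sessions = [
    ["search_products", "check_stock", "add_to_cart"],
    ["search_products", "ask_clarification", "search_products", "check_stock"],
]
counts = transition_counts(sessions)
```

Here `counts[("search_products", "check_stock")]` is 2, while the loop through `ask_clarification` and back to `search_products` shows up as its own pair of transitions, something a linear funnel has no place for.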

2. PII handling

Numerous studies suggest that end users are very comfortable sharing very personal information with an AI agent. Not every brand will be building an agent with the aim of collecting deeply personal information from its users (though some might, such as therapeutic services). Even so, PII can now appear anywhere in an AI agent interaction, and brands need to consider very carefully how it is handled.
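As a small illustration of handling PII before a chat message reaches an analytics pipeline, the sketch below masks email addresses and phone-like numbers. The two regular expressions are deliberately simple assumptions for demonstration; they will miss many forms of PII (names, addresses, health details) and are not a substitute for a proper redaction or classification service.

```python
import re

# Simplified, illustrative patterns. Real PII detection needs far more coverage.
EMAIL_RE = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
PHONE_RE = re.compile(r"\+?\d[\d\s().-]{7,}\d")


def redact_pii(text: str) -> str:
    """Replace email addresses and phone-like digit runs with placeholders."""
    text = EMAIL_RE.sub("[EMAIL]", text)
    text = PHONE_RE.sub("[PHONE]", text)
    return text


message = "Reach me at jane.doe@example.com or +44 20 7946 0958"
safe_message = redact_pii(message)
```

Applying a step like this before storage means the raw identifiers never land in the event stream, although free-text chat will still need review for less structured personal details.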

3. The behavior context gap

Most of the agentic applications out in the wild today suffer from the “blank page” problem.

They require the human user to explicitly type out all the contextual information for the agent. Implicit context, such as which pages they’ve been looking at and what actions they’ve been performing, is not available to the agent.

This context is potentially vital. Imagine a user who is trying to understand why their payment isn’t going through and opens an agentic support chatbot. The agent has no visibility of the repeated payment attempts or the repeated refreshing of the checkout page.

As a result, the user has to provide all the context to the agent manually, and probably go through a mini-interview in order to get that information. That’s a broken experience, and it’s a data problem at its core.
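A minimal sketch of closing that gap is shown below: recent behavioral events are summarized into a short text block that could be supplied to the agent alongside the user's first message. The `BehaviorEvent` fields, the ten-minute window and the output wording are all assumptions made for illustration, not a description of any particular product.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass
class BehaviorEvent:
    name: str         # e.g. "payment_failed" (hypothetical event name)
    page: str         # e.g. "/checkout"
    timestamp: float  # seconds since epoch


def build_agent_context(events: list[BehaviorEvent], now: float,
                        window_seconds: float = 600) -> str:
    """Summarize events from the last `window_seconds` as text for the agent."""
    recent = [e for e in events if 0 <= now - e.timestamp <= window_seconds]
    if not recent:
        return ""
    counts = Counter((e.name, e.page) for e in recent)
    lines = [f"- {name} on {page} x{n}" for (name, page), n in counts.most_common()]
    return "Recent user activity:\n" + "\n".join(lines)


now = 10_000.0
events = [
    BehaviorEvent("payment_failed", "/checkout", now - 120),
    BehaviorEvent("payment_failed", "/checkout", now - 90),
    BehaviorEvent("page_view", "/checkout", now - 60),
    BehaviorEvent("payment_failed", "/checkout", now - 30),
    BehaviorEvent("page_view", "/home", now - 5_000),  # outside the window
]
context = build_agent_context(events, now)
```

With this context in hand, the support agent in the payment example could open by acknowledging the three failed attempts rather than asking the user to describe them.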

4. The underlying model behavior shifting beneath you

A very familiar feeling for those who have worked in SEO for a long time is how algorithms you rely on, owned by a 3rd party, suddenly change in behavior without warning.

In the search engine space, it’s Google’s proprietary search algorithm changing how it values certain signals from websites, meaning your website rankings could change with material impacts on your company’s bottom line.

Brands building on LLMs from the biggest research labs (including Google) find themselves in a very similar scenario. If you build a 1st party branded agent powered by Claude, GPT or Gemini, and you get it behaving as you like, it’s very possible that one day the agent will start reacting and behaving differently in front of your customers.

If this happens, having real-time insight into the model’s reasoning in front of customers is critical to keeping your CX as unaffected as possible.

The state of play with 1st party, customer facing agents

This is still very much an emerging field in tech and analytics. The technology is new and maturing, and in some areas simply nascent. Because of this, it’s fair to say that there are still no real best practices or standards to follow. The most promising approaches we’re starting to see include:

- Analyzing raw chat log transcriptions

- Embedding tracking functions into tool calls

- Adapting traditional client-side and server-side tracking to a 1st party agent

- Instructing agents to be aware of tracking and to instrument their own behavior

- A hybrid approach of multiple methods
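To illustrate the second approach in the list above, embedding tracking into tool calls, here is a minimal sketch using a decorator that records an event every time the agent invokes a tool. The `EVENT_LOG` list stands in for whatever tracker or event pipeline a team actually uses, and `check_stock` is a hypothetical tool; neither reflects a specific agent framework's API.

```python
import functools
import time

# Stand-in for a real event tracker or pipeline (assumption for this sketch).
EVENT_LOG: list[dict] = []


def tracked_tool(func):
    """Wrap an agent tool so every call emits a behavioral event."""
    @functools.wraps(func)
    def wrapper(*args, **kwargs):
        started = time.monotonic()
        status = "error"  # overwritten only if the tool returns normally
        try:
            result = func(*args, **kwargs)
            status = "ok"
            return result
        finally:
            # Arguments are deliberately not logged, since they may contain PII.
            EVENT_LOG.append({
                "event": "agent_tool_call",
                "tool": func.__name__,
                "status": status,
                "duration_ms": round((time.monotonic() - started) * 1000, 2),
            })
    return wrapper


@tracked_tool
def check_stock(sku: str) -> int:
    """Hypothetical tool: look up the stock level for a product."""
    return {"SKU-1": 4}.get(sku, 0)


stock = check_stock("SKU-1")
```

Because the event is emitted in `finally`, failed tool calls are recorded too, which matters when the question is what the agent attempted on the customer's behalf rather than only what succeeded.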

Although there are no concrete playbooks yet, the businesses that are already experimenting with 1st party agents and multi-agent systems (MAS), and thinking about their event models and tracking designs alongside the build, will be very well placed as the technology matures.

What comes next?

This taxonomy of 1st party vs 3rd party agents, and customer-facing vs internal agents, helps us all frame the conversation around how different kinds of agents are affecting business operations, and frame the problem of understanding agentic behavior.

But this does not solve the problem. As agentic traffic and 1st party agents continue to grow in prominence, data teams and technology functions need a practical path forward. That path is to treat a 1st party agent as a first-class entity in the behavioral data model, not something that can have behavioral data “bolted on” as an afterthought.

In part two of this blog series, we’ll discuss in much more detail how to gain that insight into an agent’s behavior, the challenges of applying traditional behavioral tracking to agentic applications, and our proposed pattern for a robust behavioral data collection and AI agent analytics strategy for 1st party agentic applications.
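As a rough sketch of what "first-class" could mean in practice, the example below gives every event an explicit actor, so an agent's actions are modeled the same way as a human's instead of being attached to a human event as extra properties. All type and field names here are hypothetical, and this is not the pattern proposed in part two; it only illustrates the idea.

```python
from dataclasses import asdict, dataclass, field
from typing import Literal, Optional


@dataclass
class Actor:
    # The agent is an actor in its own right, alongside the human user.
    kind: Literal["human", "first_party_agent", "third_party_agent"]
    id: str


@dataclass
class BehavioralEvent:
    name: str
    actor: Actor
    session_id: str
    on_behalf_of: Optional[str] = None  # user id when an agent acts for someone
    properties: dict = field(default_factory=dict)


event = BehavioralEvent(
    name="add_to_cart",
    actor=Actor(kind="first_party_agent", id="support-agent-v1"),
    session_id="session-123",
    on_behalf_of="user-456",
    properties={"sku": "SKU-1"},
)
payload = asdict(event)
```

With the actor recorded on every event, questions such as "which cart additions were made by the agent on the user's behalf?" become a simple filter rather than an inference.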

Appendix

Marketing/SEO and visibility

Consumers now use AI to help with almost all aspects of their daily lives (from cooking to wedding planning), and that includes researching, discovering and learning about products and services. Brands are therefore very interested in how well they are “showing up” when users chat with an AI about whatever they offer.

Take a prompt like “I’m planning a vacation to Greece, what are some good locations for a family holiday, particularly focusing on all-inclusive resorts with good kids facilities near the beach”. From a travel brand’s perspective, it’s critically important to know if and when their brand is being surfaced by the AI to the user. This is very similar to the traditional SEO challenge, and the field is increasingly referred to as Artificial Intelligence Optimization (AIO) or AI Visibility. This area covers things like:

- AI/agentic web crawlers, which scrape content so that future iterations of the LLMs know and understand which brands are relevant, and so that agents can access content to pull that information into a conversation

- Embedded AI applications, such as ChatGPT’s Apps where users can interact with a brand directly in a personal AI app

- Agentic commerce systems, such as Google and Shopify’s Universal Commerce Protocol which allows agents to transact on behalf of users

DevOps/LLM-Ops

For companies building agentic systems in-house, engineers are very interested in monitoring the performance and uptime of those agentic systems, just like any other internal system they may be running. This traditionally falls within the remit of DevOps engineers, who have historically monitored things like uptime, CPU utilization, errors and latency. As a result, a new field of Agent Observability has formed and LLM-Ops as a discipline has become more commonplace.

There are dedicated Agent Observability platforms, such as Langfuse and Arize, and as referenced earlier in this post, they allow LLM-Ops professionals to monitor things like:

- Uptime

- Tracing

- Token consumption

- Latency

- Costs

These tools mean that engineers building agentic platforms for their businesses can ensure their internal users get the best experience possible, and allow them to iterate and improve their system’s capabilities.

Both of these areas of focus are obviously crucial for businesses to understand, and it’s great to see dedicated software being developed to help answer these business questions and serve users better.