What Is Agentic Analytics? A Guide for Data Leaders

“The tools work, just not well enough.”

That’s what we keep hearing from data leaders who’ve deployed AI-powered analytics agents. The early results were really exciting - moments where the agent delivered an insight that would’ve taken an analyst hours. But then it used the wrong table, misinterpreted a metric, and delivered a confidently wrong answer. One or two of those scenarios, and trust collapsed.

The problem isn’t the AI model. It’s the data foundation beneath it.

At Snowplow, we work with companies like Conde Nast, Strava, and HelloFresh to make their behavioral data AI-ready. And across the market, we're seeing this pattern play out everywhere: organizations investing heavily in AI analytics tools, but struggling to get consistent results because the data foundation was never built to be understood by AI.

Why Agentic Analytics Matters Now

For three decades, organizations have been trying to become more data-driven. They invested heavily in warehouses, dashboards, and BI platforms. And yet the promise of self-serve analytics never fully materialized. The tools kept getting better, but the specialist skills required to use them never really went away. Ask most business users in product, marketing, or ecommerce teams, and they’ll probably tell you that they cannot get a reliable answer from their own data without filing a ticket with the analytics team.

That bottleneck has now become a direct cost. Not because you have the wrong people, but because your best people are stuck doing the wrong work. Too much of their time is currently being absorbed by data preparation that AI agents could handle, leaving less time for higher-order thinking such as framing questions, interpreting results, and advising the business.

Agentic Analytics, Defined

Agentic analytics is simply the use of AI agents to do data analysis. In practice, this spans three areas:

- Agents that help people across the business to understand what’s happening, what’s changed, and why, including measuring experiments, campaigns, and product changes

- Agents that build and maintain dashboards so teams have better visibility of their data

- Agents that work alongside other agents to perform autonomous tasks, like running and optimizing marketing campaigns in real time

Most organizations today rely on three layers of analytics capability. You have dashboards that give business users a pulse on performance and flag when something looks off. You have BI tools and pivot interfaces that let you interrogate data further, diagnosing issues by slicing across dimensions. And you have data analysts who use a broader toolkit, including statistical analysis and experimentation, to deep-dive on specific problems, identify root causes, and measure the impact of solutions.

Agentic analytics transforms all three of these capabilities. Business users can create their own dashboards because agents build them on demand, so no need to wait for your BI team. Agents can answer analytical questions directly, making it faster and easier than self-serving in a pivot table or briefing an analyst for a deep-dive. And agents can support autonomous workflows, working alongside other agents running campaigns, optimizing spend, or personalizing journeys using live data.

The momentum behind this shift is hard to miss. It’s not just the term that is gaining traction. Everyone is launching data agents. The BI vendors: Tableau has repositioned its entire platform around agentic analytics. The data platforms: Snowflake and Databricks are both shipping agent-native interfaces. And the semantic layer providers are racing to make their metadata consumable by agents.

The Four Levels of Agentic Analytics

Think of agentic analytics as a progression. It’s not a single capability. We broadly see four levels of maturity that describe where an organization sits on the journey from traditional analytics to fully autonomous decisioning. And critically, the further you progress through the levels, the more context the agent needs to be successful.

At the first level, AI agents make everyone more productive at answering questions with data. For data analysts, this means spending less time composing SQL and more time thinking about what questions to ask. For business users, it means being empowered to explore data in ways that were previously only possible with analyst support, where you needed to file a Jira ticket and wait for resource allocation. This doesn’t replace dashboards. You can still get the pulse of a business faster by glancing at a dashboard than waiting for an agent to compose a response. But agents unlock the long tail of questions that no one ever built a dashboard for.

Where agents start to outperform humans at Level 1 is monitoring. Humans are poor at watching systems around the clock. Agents can run 24/7, and when equipped with your behavioral data, they don’t just spot anomalies; they perform an initial analysis, develop and test hypotheses, and present findings to the business. So not just “your conversions dropped,” but “here’s what we think happened and why.”

At the second level, agents diagnose why something happened. So let’s say your conversions drop. The agent identifies exactly when the change occurred. It looks across the data to find what else changed around that time. It slices by traffic source, conversion path, user type, and offer to understand where the impact is most pronounced. It then cross-references with recent product deployments or CMS changes. This is multi-step reasoning that would typically take a skilled analyst several hours. The agent does it in seconds.
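
As a rough illustration of the slicing step, the sketch below compares conversion rates before and after a change point for each traffic source and ranks the sources by the size of the drop. The session records, field layout, and the `rank_segments_by_drop` helper are all hypothetical; a real agent would run equivalent queries against the warehouse rather than an in-memory list.

```python
from collections import defaultdict

# Hypothetical session records: (day, traffic_source, converted)
SESSIONS = [
    (1, "email", True), (1, "email", False), (1, "paid", True), (1, "paid", True),
    (2, "email", True), (2, "email", False), (2, "paid", True), (2, "paid", False),
    (3, "email", True), (3, "email", False), (3, "paid", False), (3, "paid", False),
    (4, "email", True), (4, "email", False), (4, "paid", False), (4, "paid", False),
]


def conversion_rate(rows):
    """Share of sessions that converted; 0.0 for an empty slice."""
    return sum(1 for r in rows if r[2]) / len(rows) if rows else 0.0


def rank_segments_by_drop(sessions, change_day):
    """Compare conversion before/after change_day for each traffic source."""
    before, after = defaultdict(list), defaultdict(list)
    for row in sessions:
        bucket = before if row[0] < change_day else after
        bucket[row[1]].append(row)
    drops = {
        source: conversion_rate(before[source]) - conversion_rate(after[source])
        for source in set(before) | set(after)
    }
    # Largest drop first: this is where the impact is most pronounced.
    return sorted(drops.items(), key=lambda item: item[1], reverse=True)


ranked = rank_segments_by_drop(SESSIONS, change_day=3)
print(ranked)
```

In this toy data the drop is concentrated entirely in the paid channel, which is the kind of finding the agent would then cross-reference against deployments or CMS changes.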

At the third level, agents recommend what to do. Based on the diagnosis, the agent proposes specific actions, whether that be to revert a deployment, reallocate budget to a higher-performing channel, or trigger a retention campaign for an at-risk segment. In this scenario, the human still approves and executes. But to get here, the agent needs more than data. It needs operational context. If the sales pipeline is dropping, the agent has to understand how the pipeline is generated and what levers the team has. If the conversion rate is falling, it needs visibility of CMS changes. It also needs context from outside the business. For example, if last month included Christmas, a January drop is expected, so no alarm should be triggered.
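
The seasonality point can be made concrete with a small sketch. It assumes a hypothetical table of monthly conversions and raises an alert only when a month is down against the same month a year earlier, not merely against the previous month. The figures, the 10% threshold, and the `should_alert` helper are illustrative assumptions.

```python
# Hypothetical monthly conversions keyed by (year, month).
MONTHLY_CONVERSIONS = {
    (2024, 12): 1500, (2025, 1): 1000,
    (2025, 12): 1600, (2026, 1): 1050,
}


def should_alert(data, year, month, threshold=0.10):
    """Alert only if the month is down more than `threshold` year over year."""
    current = data[(year, month)]
    last_year = data.get((year - 1, month))
    if last_year is None:
        return False  # No seasonal baseline available; do not raise an alarm.
    change = (current - last_year) / last_year
    return change < -threshold


# January is far below December, but slightly up on the previous January.
print(should_alert(MONTHLY_CONVERSIONS, 2026, 1))
```

Returning `False` when no baseline exists is a design choice made for this sketch; a production system might instead fall back to a different comparison.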

At the fourth level, agents act autonomously. The system identifies a problem, recommends a solution, and then executes it: adjusting the campaign bid, triggering the workflow, or updating the customer journey in real time. This is where agentic analytics moves from reactive reporting to proactive, autonomous decisioning. We’re already seeing this in focused use cases where the data is well understood and business context is contained, such as insurers automating claims processing, or marketers using AI decisioning to automate the writing and sending of emails and push notifications.

Most organizations pursuing agentic analytics today are doing Levels 1 and 2, but struggling to do them consistently. Quite often the conversational analytics works, just not reliably enough. Meanwhile, they’re experimenting with Levels 3 and 4 in limited, well-scoped use cases. The pattern across all these Levels is that you need context. And the further you mature, the more extensive the context needs to be. A strong data foundation is a precondition for the context. It’s the part that defines what the data means. And without it, even Level 1 breaks down.

The Context Challenge in Agentic Analytics

Organizations are pouring budget into AI-powered analytics tools, seeking two key outcomes. Genuine data democratization, where every business user has an AI analyst instead of waiting on an overstretched data team. And autonomous decision-making, where agents act on data in real time to optimize campaigns, trigger workflows, and personalize journeys.

This ambition is absolutely right. But the reality is that data teams find themselves annotating data, composing semantic layers, and struggling to keep those definitions up to date and complete. They're experimenting with limiting which tables the agent can access, researching evaluation frameworks, training colleagues to prompt the tools differently. These are all signs of a data foundation that was never built to be understood by AI. So the tools work, just not well enough.

Pretty much every analytics vendor is racing to build better agentic experiences. They're leveraging each new frontier model as it's released. They're building support for open semantic layers and workflows to help their customers populate those layers, because they know that's the fastest way to better agentic analytics.

But here’s the problem. These vendors generally don’t have visibility of how the data was created and processed before it arrived in the warehouse. They have no visibility of the business context in which that data was generated. So they leave it to their customers to build the semantic and context layer. Some have developed feedback loops where humans can thumbs-up or thumbs-down an agent’s analysis, using reinforcement learning so the agent improves over time. But it still leaves the customer to build the context. And that puts a significant burden on organizations.

An agent is only as intelligent as the context it has, no matter how sophisticated the underlying model. If you point a powerful reasoning model at warehouse tables with no definitions, metrics that mean different things across dashboards, and business logic that only lives in the heads of a few senior analysts, you will get confidently wrong answers. This is not an edge case either. According to Gartner, 63% of organizations either do not have or are not sure they have the right data management practices for AI.

In practice, there are three distinct challenges organizations face when providing agents with context:

- It’s hard to build all the relevant context on the data without a deep understanding of exactly how that data was created and processed before being exposed to the agent. This is where data collection and processing vendors are uniquely positioned. If you control the end-to-end flow from event creation through to the warehouse, you can feed this metadata into the semantic layer so you don’t have to build it manually.

- There’s a large body of business-specific context that the organization has to be responsible for: how pipeline is generated, what campaigns are running, what product changes shipped last week. Organizations need to think holistically about making more of their business legible to AI.

- All of this context changes over time. This isn’t a one-off exercise. You need context infrastructure and processes that keep it updated as your business, your data, and your products evolve.

IDC’s FutureScape 2026 research quantifies what happens if you do not act: by 2027, companies that do not prioritize high-quality, AI-ready data will struggle to scale their agentic solutions, resulting in a 15% productivity loss. That 15% is not theoretical. It is the cumulative drag of every unreliable query, every abandoned pilot, and every analyst hour spent doing the work a well-contextualized agent should have handled.

What Makes Customer Data AI-Ready for Agentic Analytics?

For your AI agents to deliver reliable, trustworthy answers, your underlying data needs three properties.

First, the data must be structured in a way that is easy for the agent to work with. If the agent has to perform complex transformations to answer a question, it’s more likely to make mistakes. Data that arrives in clean, well-modeled, sessionized tables, with clearly defined metrics and dimensions, gives agents a reliable starting point.

Secondly, the data needs a semantic layer. This is a structured layer of metadata that sits alongside your data and describes what it means: how tables relate, what key business metrics are defined, and how they’re calculated. Without a semantic layer, your agents are just guessing. With one, they generate accurate SQL and interpret results in context. Snowflake’s research confirms this: its agentic semantic models improved text-to-SQL accuracy by more than 20% compared to agents without schema understanding.
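
To make the idea concrete, here is a minimal sketch of a semantic layer expressed as plain Python data, with a helper that compiles a metric request into SQL. The table, column, and metric names are hypothetical, and real semantic layers use their own formats; the point is only that the metric definition lives in metadata rather than being left to the agent to guess.

```python
# Hypothetical semantic layer: metric and dimension definitions as metadata.
SEMANTIC_LAYER = {
    "table": "analytics.sessions",
    "metrics": {
        "conversion_rate": {
            "sql": "COUNT(DISTINCT CASE WHEN converted THEN session_id END) * 1.0"
                   " / COUNT(DISTINCT session_id)",
            "description": "Share of sessions with at least one purchase.",
        },
    },
    "dimensions": {
        "traffic_source": {"sql": "traffic_source"},
        "device": {"sql": "device_class"},
    },
}


def compile_query(layer, metric, dimension):
    """Build SQL from governed definitions; reject anything undefined."""
    if metric not in layer["metrics"]:
        raise KeyError(f"Unknown metric: {metric}")
    if dimension not in layer["dimensions"]:
        raise KeyError(f"Unknown dimension: {dimension}")
    metric_sql = layer["metrics"][metric]["sql"]
    dim_sql = layer["dimensions"][dimension]["sql"]
    return (
        f"SELECT {dim_sql} AS {dimension}, {metric_sql} AS {metric}\n"
        f"FROM {layer['table']}\n"
        f"GROUP BY {dim_sql}"
    )


print(compile_query(SEMANTIC_LAYER, "conversion_rate", "device"))
```

Because the helper refuses metrics and dimensions that are not defined, a request for an unknown metric fails loudly instead of producing plausible but wrong SQL.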

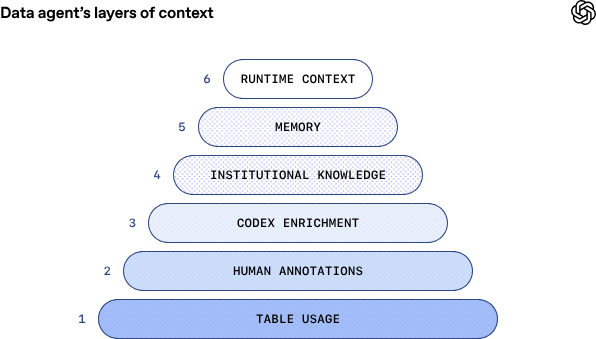

But the semantic layer is just one part of the context an agent needs. OpenAI’s engineering team, writing about their own internal data agent, outline a multi-layered context system that also includes table usage metadata (which tables are trusted and how they’re used in practice) and broader business context: what type of business this is, what drives it, what teams operate it, what targets and OKRs are in place, and what decisions have been taken and when. The semantic layer sits in the middle of that stack. Below it, you need granular metadata on how the data was created and processed. Above it, you need organizational knowledge that only the business can provide.

Thirdly, and this is where most implementations stall, the semantic layer should not be a manual, post-hoc exercise. The most effective approach is to auto-generate semantic definitions directly from the event tracking plan or data model, so context engineering does not become yet another bottleneck for the data team. This matters because the context problem is never fully solved. No vendor can credibly claim to provide all the context an agent needs. The semantic layer is one critical component among many. Your aim should be to minimize the manual work required on the data you control, and deliver the best possible starting point from day one. That way your data team can focus on extending context rather than building it from scratch.
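
A minimal sketch of auto-generation, assuming a hypothetical tracking plan in which each event lists its properties with a type and a description. The plan format and the `generate_semantic_definitions` helper are illustrative only and do not represent any particular vendor's schema or API.

```python
# Hypothetical tracking plan: events and their documented properties.
TRACKING_PLAN = {
    "add_to_cart": {
        "description": "User adds a product to the basket.",
        "properties": {
            "sku": {"type": "string", "description": "Product identifier."},
            "price": {"type": "number", "description": "Unit price in USD."},
        },
    },
}


def generate_semantic_definitions(plan):
    """Derive dimensions and measures from the plan instead of hand-writing them."""
    definitions = {}
    for event_name, event in plan.items():
        dimensions, measures = {}, {}
        for prop, spec in event["properties"].items():
            if spec["type"] == "number":
                # Numeric properties become summable measures by default.
                measures[f"total_{prop}"] = {
                    "sql": f"SUM({prop})",
                    "description": spec["description"],
                }
            else:
                dimensions[prop] = {"sql": prop, "description": spec["description"]}
        # Every event also gets a simple count measure.
        measures[f"{event_name}_count"] = {
            "sql": "COUNT(*)",
            "description": f"Number of {event_name} events.",
        }
        definitions[event_name] = {
            "description": event["description"],
            "dimensions": dimensions,
            "measures": measures,
        }
    return definitions


definitions = generate_semantic_definitions(TRACKING_PLAN)
print(sorted(definitions["add_to_cart"]["measures"]))
```

The generated definitions are a starting point: the data team can then extend them with business-specific metrics rather than documenting every property by hand.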

How to Break the Bottleneck: A Practical Path to Agentic Analytics

If you’re investing in AI analytics tools and not seeing consistent results, there are two things to focus on: well-structured data so the agent doesn’t have to work too hard to wrangle it, and sufficient context so it understands what the data means.

Start by evaluating the quality and structure of your customer behavioral data. Can an agent reason over it directly, or does it need to perform complex transformations first? Clean, well-modeled tables with clearly defined metrics and dimensions give agents a reliable starting point. If your data requires significant post-processing before it’s usable, that’s your first bottleneck.

Next, assess your context. The three layers described in the previous section - table usage metadata, semantic definitions, and business context - each need attention. If your data provider controls the end-to-end pipeline, the first two can largely be automated. The business context layer is newer and harder, but can be developed iteratively. The key question is: how much of this context is your team building manually, and how much is delivered out of the box?

Once you have well-structured data and a semantic layer in place, you can choose the agent platform that matches your data stack. Snowflake Intelligence, Databricks Genie, and similar tools from major cloud data platforms are designed to work within your existing security and governance model. Keeping your behavioral data within the platform where access is already managed reduces risk and accelerates time to value.

Finally, start piloting with a focused use case. Pick a single analytical workflow, like customer conversion analysis or campaign performance monitoring, where you can measure time-to-insight before and after. You can use the pilot to build organizational trust in agentic analytics before scaling. The companies seeing the highest ROI are not those that rolled out agents everywhere at once. They’re almost always the ones that got the data structure and context right first, proved the value, and expanded from there.

Getting Started with Agentic Analytics

The window to build your data foundation advantage is rapidly narrowing, and the cost of waiting is compounding. Every month your agents lack context, your AI tools underdeliver, your analysts remain buried in prep work, and your business users cannot trust the outputs enough to act on them.

The question is no longer whether agentic analytics will reshape how your organization uses data to succeed. It’s whether your data foundation will be ready when you are.

The bottleneck has never been the agent. It has always been the context.

Want to see how Snowplow delivers AI-ready behavioral data to your data platform for agentic analytics? Talk to our team about building your data foundation for Snowflake Intelligence, Databricks Genie, and the next generation of AI-powered analytics. Contact us.

FAQs

What is agentic analytics?

Agentic analytics refers to the use of AI agents to query data, diagnose problems and generate insights alongside human analysts and business users. Instead of relying solely on dashboards and manual analysis, AI agents can interpret data, explain why changes occurred, and recommend actions based on what they observe.

This approach builds on earlier analytics paradigms such as business intelligence and augmented analytics, but allows AI agents to perform multi-step reasoning and automate analytical workflows.

Why do AI agents struggle with enterprise data?

AI agents often struggle with enterprise data because most organizational datasets lack the context needed to interpret business metrics correctly. Definitions for metrics, table relationships and business logic are frequently scattered across documentation, dashboards and individual analysts’ knowledge.

Without a clear semantic understanding of the data, AI agents may generate queries that appear valid but produce misleading results.

What data foundation is required for agentic analytics?

Agentic analytics requires data that is structured, enriched and supported by clear semantic definitions. In practice, for customer and user data, this means capturing event-level behavioral data, defining consistent business metrics and documenting relationships between datasets.

When this context exists, AI agents can interpret data more reliably and generate accurate insights.

Will agentic analytics tools hallucinate?

AI agents can produce incorrect results when they lack sufficient context about the underlying data or business logic. Large language models are good at generating SQL queries, but they may reference incorrect columns or misinterpret metrics if the schema is unclear.

Providing structured data and a strong semantic layer significantly reduces the risk of these errors.

Why do AI agents need a semantic layer for analytics?

A semantic layer defines how datasets relate to each other and how key business metrics are calculated. It provides the context AI agents need to interpret data and generate accurate queries.

Without a semantic layer, agents may produce technically valid queries that still return misleading results because the business logic behind the data is unclear.

Do companies need to replace their BI tools to adopt agentic analytics?

Most organizations do not need to replace their BI tools to adopt agentic analytics. Dashboards and reporting platforms can continue to serve monitoring and governance needs.

Agentic analytics typically adds a conversational interface where AI agents analyze data and surface insights, allowing more people in the organization to access insights without writing SQL or building dashboards.