Real-Time Editorial Analytics with Snowplow and ClickHouse: A New Solution Accelerator for Media Publishers

Most editorial analytics stacks follow the same pattern: collect events, load them into a warehouse on a schedule, run dbt models overnight, surface results in a BI tool the next morning. It works fine for historical reporting. But when an article is breaking and you need to know right now which headline variation is driving engagement, or which ad placement is converting on a trending story, batch pipelines can't help you.

This is the gap we set out to close with our latest solution accelerator: Real-Time Editorial Analytics with ClickHouse. Built in collaboration with the ClickHouse team, this accelerator is a complete, deployable reference implementation. It includes a media publisher site, a Snowplow streaming pipeline, and a ClickHouse-powered dashboard that you can run locally with a single docker-compose up.

The accelerator is designed for data engineers and developers at media and publishing companies who want to see what real-time editorial intelligence actually looks like in practice, end to end, without weeks of integration work.

What you're actually building

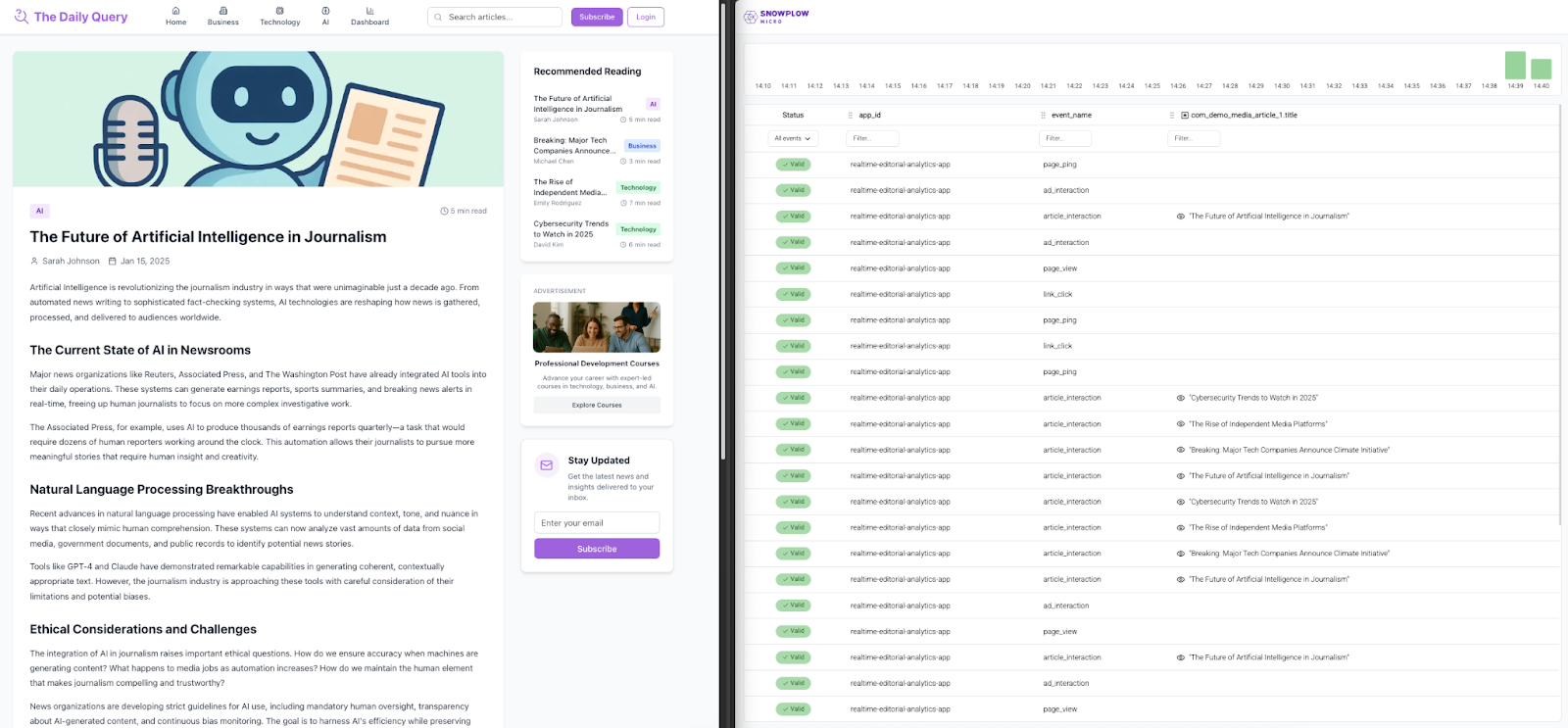

The accelerator ships as a working application called "The Daily Query", a mock publisher site built with Next.js that features articles and advertisements. It's instrumented with Snowplow tracking, connected to a streaming pipeline, and backed by a ClickHouse Cloud instance that powers a live editorial dashboard. The entire stack runs in Docker.

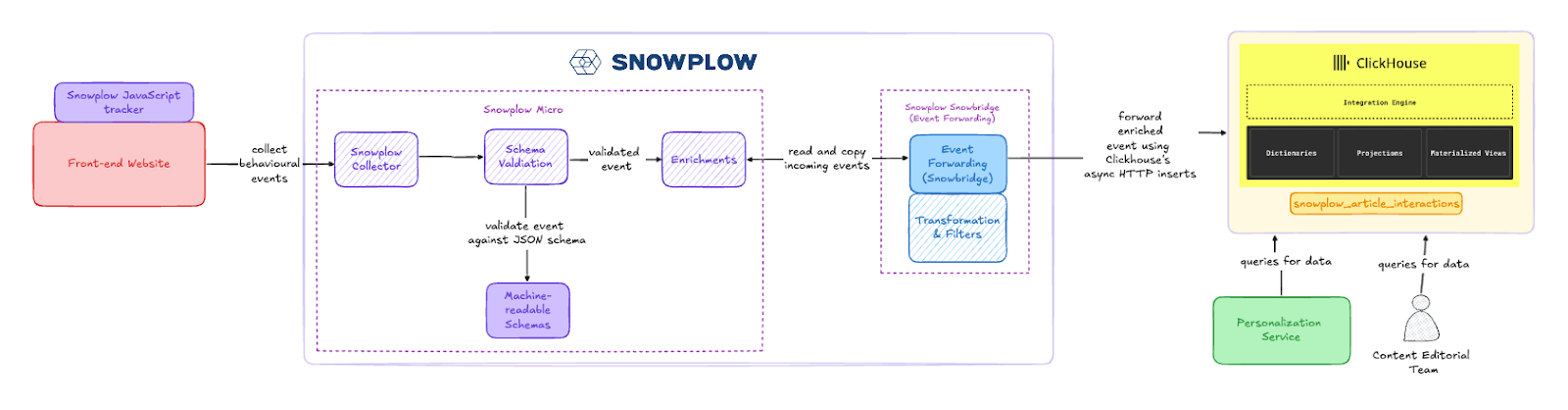

Here's how the components fit together:

Next.js Web Application ("The Daily Query"): The publisher site tracks reader behavior using Snowplow's JavaScript tracker, configured via Snowtype for type-safe event definitions. Instead of relying on generic page views alone, the tracker captures typed self-describing events (article_interaction and ad_interaction) alongside the standard page_view and page_ping events, using custom schemas like com_demo_media_article_interaction and com_demo_ad_interaction. This gives you granular, structured data about how readers engage with content and ads, not just that they visited a page.
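To make "typed self-describing event" concrete, here is a minimal sketch of what such an event could look like in TypeScript. The field names, the allowed interaction types, and the Iglu schema URI (vendor, name, and version) are assumptions for illustration; the accelerator's real definitions are generated by Snowtype and may differ.

```typescript
// Hypothetical payload shape; field names are assumed from the metadata
// the article describes (title, category, interaction type).
interface ArticleInteraction {
  article_title: string;
  category: string;
  interaction_type: "view" | "share" | "scroll"; // assumed values
}

// A Snowplow self-describing event pairs an Iglu schema URI with a data payload.
interface SelfDescribingEvent<T> {
  schema: string;
  data: T;
}

// Assumed schema URI: vendor, name, and version are guesses for illustration.
const ARTICLE_INTERACTION_SCHEMA =
  "iglu:com.demo.media/article_interaction/jsonschema/1-0-0";

// Wraps a typed payload in the self-describing envelope, rejecting empty titles.
function buildArticleInteraction(
  data: ArticleInteraction
): SelfDescribingEvent<ArticleInteraction> {
  if (data.article_title.trim() === "") {
    throw new Error("article_title must not be empty");
  }
  return { schema: ARTICLE_INTERACTION_SCHEMA, data: data };
}

const exampleEvent = buildArticleInteraction({
  article_title: "Columnar Storage Explained",
  category: "Technology",
  interaction_type: "view",
});
```

The compiler rejects a misspelled field or an unknown interaction type before the event ever leaves the browser, and the pipeline validates the same structure again against the schema at collection time.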

Snowplow Micro: A lightweight, locally-runnable version of the Snowplow pipeline. Micro collects events, performs schema validation, enriches the data, and passes everything downstream to Snowbridge. You can open the Micro UI to inspect every event in real time — filtering by event name, article title, interaction type, and any other dimension you're collecting.

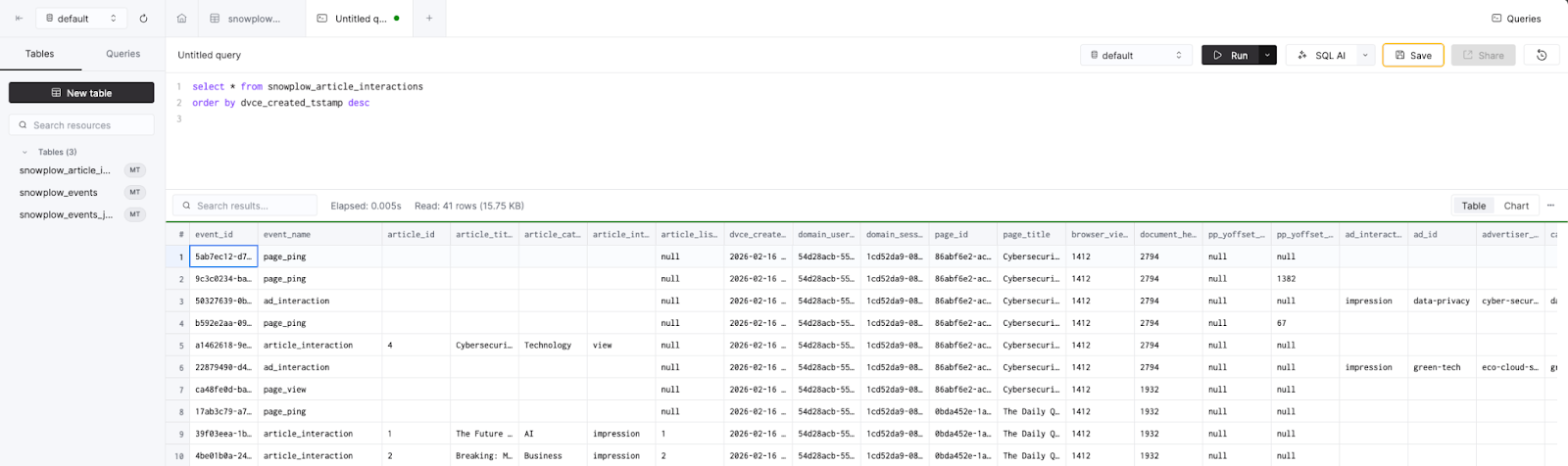

Snowplow Event Forwarding: Using Snowbridge under the hood, Snowplow can replicate event streams to external destinations. Here, Snowbridge filters the enriched event stream to forward only the relevant subset of events and dimensions, then lands them in a single snowplow_article_interactions table in ClickHouse via ClickHouse's HTTP interface. There is no Kafka and no intermediate message broker, just a direct, low-latency connection that keeps the architecture simple.
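The filter-then-forward step can be sketched in a few lines of TypeScript. This is not Snowbridge's configuration or code; it only illustrates the shape of the HTTP request that an insert into ClickHouse takes. The host name, the simplified event type, and the choice of which event names get forwarded are all assumptions, and authentication is left out.

```typescript
// Simplified stand-in for a Snowplow enriched event; real events carry many more fields.
interface EnrichedEvent {
  event_name: string;
  dvce_created_tstamp: string;
  domain_userid: string;
}

interface InsertRequest {
  url: string;
  method: "POST";
  body: string;
}

// Assumption: only these two event types are forwarded to ClickHouse.
const FORWARDED_EVENTS: string[] = ["article_interaction", "ad_interaction"];

function buildInsertRequest(
  baseUrl: string,
  table: string,
  events: EnrichedEvent[]
): InsertRequest {
  // Keep only the relevant subset of events.
  const rows = events.filter((e) => FORWARDED_EVENTS.indexOf(e.event_name) !== -1);
  // ClickHouse's HTTP interface takes the INSERT statement as a query
  // parameter and the rows as the request body, one JSON object per line.
  const query = `INSERT INTO ${table} FORMAT JSONEachRow`;
  return {
    url: `${baseUrl}/?query=${encodeURIComponent(query)}`,
    method: "POST",
    body: rows.map((r) => JSON.stringify(r)).join("\n"),
  };
}

// Hypothetical host; a real ClickHouse Cloud URL and credentials would go here.
const sampleRequest = buildInsertRequest(
  "https://example.clickhouse.cloud:8443",
  "snowplow_article_interactions",
  [
    { event_name: "article_interaction", dvce_created_tstamp: "2025-01-01 12:00:00", domain_userid: "u1" },
    { event_name: "page_view", dvce_created_tstamp: "2025-01-01 12:00:01", domain_userid: "u1" },
    { event_name: "ad_interaction", dvce_created_tstamp: "2025-01-01 12:00:02", domain_userid: "u2" },
  ]
);
```

Because the whole hop is a single HTTP POST, there is nothing to operate between the pipeline and the database, which is the simplicity the direct connection buys you.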

ClickHouse Cloud: Each enriched event is stored as an individual row in ClickHouse, immediately queryable with standard SQL. ClickHouse's columnar storage and vectorized query engine make it possible to aggregate across millions of events with sub-second response times. That’s exactly what you need when editorial teams are refreshing dashboards every few minutes during a breaking story.

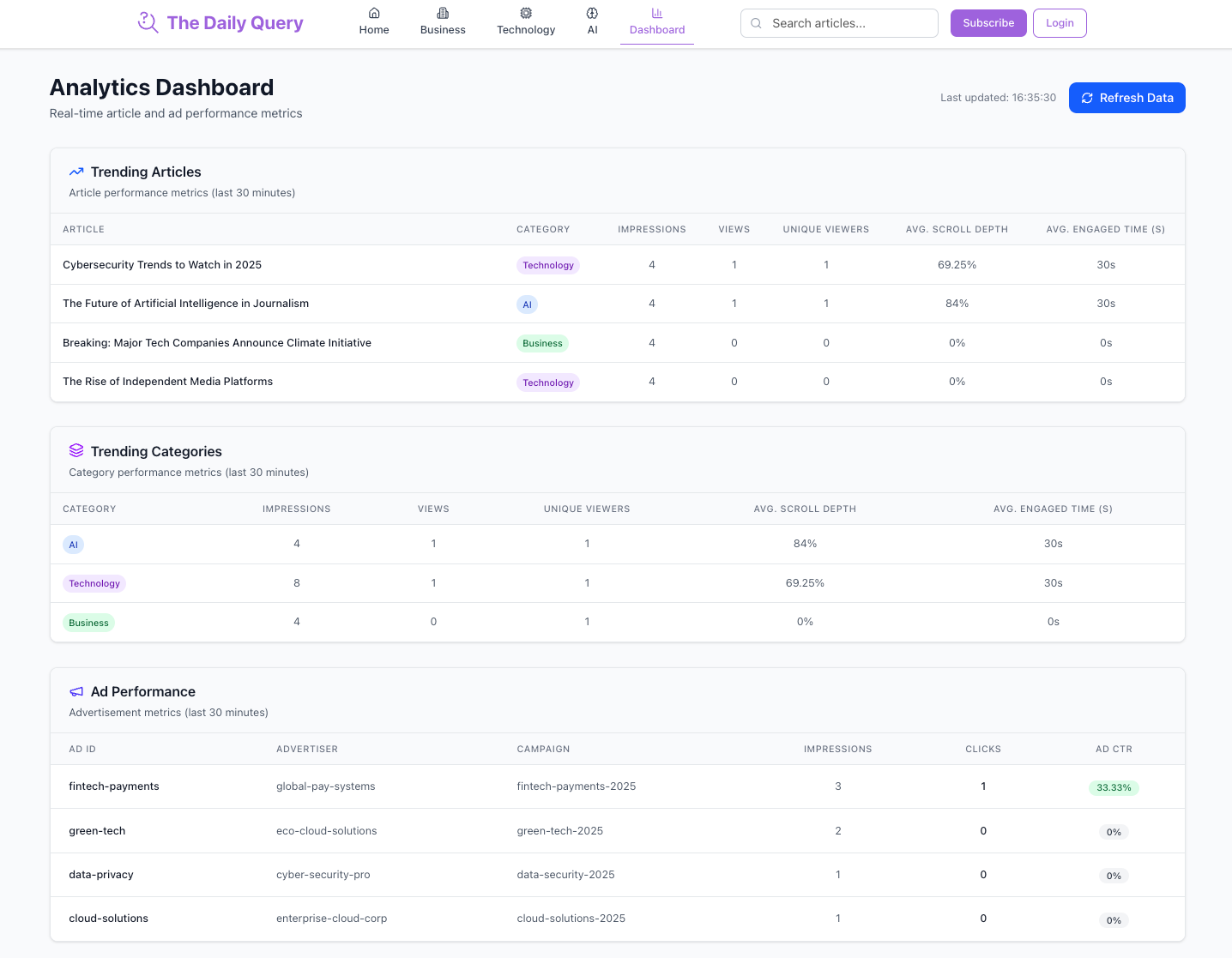

Real-Time Editorial Dashboard: The dashboard lives at /dashboard within the Next.js app itself and queries ClickHouse directly for rolling 30-minute metrics across three reports:

- Trending Articles — Which articles are generating the most engagement right now

- Trending Categories — Which content themes are gaining momentum across the site

- Ad Performance — Impression and click-through metrics by ad placement

The queries powering these reports live in the dashboard API route. They are readable and extensible, and they make a useful reference for building your own.
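As an illustration of what the Ad Performance report computes, the sketch below works out click-through rate per placement in plain TypeScript. The accelerator does this aggregation in SQL inside ClickHouse; the row shape and the "impression" and "click" values here are assumptions, not the real column names.

```typescript
// Hypothetical row shape for ad events within the rolling window.
interface AdRow {
  ad_placement: string;
  interaction_type: "impression" | "click"; // assumed values
}

interface AdStats {
  placement: string;
  impressions: number;
  clicks: number;
  ctr: number; // clicks divided by impressions, 0 when there are no impressions
}

function adPerformance(rows: AdRow[]): AdStats[] {
  // Null-prototype object so placement names never collide with built-in keys.
  const counts: Record<string, { impressions: number; clicks: number }> =
    Object.create(null);
  for (const row of rows) {
    if (!counts[row.ad_placement]) {
      counts[row.ad_placement] = { impressions: 0, clicks: 0 };
    }
    if (row.interaction_type === "impression") {
      counts[row.ad_placement].impressions += 1;
    } else {
      counts[row.ad_placement].clicks += 1;
    }
  }
  // Turn the counts into report rows, highest click-through rate first.
  return Object.keys(counts)
    .map((placement) => {
      const c = counts[placement];
      return {
        placement: placement,
        impressions: c.impressions,
        clicks: c.clicks,
        ctr: c.impressions > 0 ? c.clicks / c.impressions : 0,
      };
    })
    .sort((a, b) => b.ctr - a.ctr);
}

const sampleAdRows: AdRow[] = [
  { ad_placement: "sidebar", interaction_type: "impression" },
  { ad_placement: "sidebar", interaction_type: "impression" },
  { ad_placement: "sidebar", interaction_type: "impression" },
  { ad_placement: "sidebar", interaction_type: "impression" },
  { ad_placement: "sidebar", interaction_type: "click" },
  { ad_placement: "banner", interaction_type: "impression" },
  { ad_placement: "banner", interaction_type: "impression" },
  { ad_placement: "banner", interaction_type: "click" },
];
const sampleAdReport = adPerformance(sampleAdRows);
```

In this sample the banner placement ranks first with a click-through rate of 0.5, ahead of the sidebar at 0.25.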

Why this architecture works for real-time use cases

A few architectural decisions in this accelerator are worth calling out because they reflect trade-offs that matter for editorial analytics specifically.

Direct Snowbridge-to-ClickHouse ingestion (no message broker). For use cases where the primary consumer is a real-time dashboard, not a multi-consumer streaming topology, sending enriched events straight to ClickHouse via HTTP is a pragmatic choice. It cuts operational complexity without sacrificing the near-real-time latency that editorial teams need. If your use case later requires Kafka for fan-out to multiple consumers, Snowplow's composable infrastructure supports that too.

Schema-validated, typed events instead of generic page views. The article_interaction and ad_interaction schemas carry structured metadata — article title, category, interaction type, ad placement — that would otherwise require post-hoc parsing or enrichment in the warehouse. With Snowplow, this structure is enforced at collection time, which means the data landing in ClickHouse is already clean and query-ready.

Dashboard queries against live data. There's no materialized view layer or pre-aggregation step between ClickHouse and the dashboard. The API route runs SQL directly against the snowplow_article_interactions table with a 30-minute rolling window. ClickHouse is designed to serve large volumes of data with sub-second query times at a fraction of the cost of traditional cloud warehouses, and it scales comfortably to petabytes. For this use case, that means you can query raw event-level data directly without pre-aggregation or caching layers, and your metrics are always current. As your event volumes grow, the architecture doesn't change, and ClickHouse keeps costs manageable as you scale.
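A rolling-window query of this kind might look like the sketch below, which builds the SQL string for a Trending Articles report. The table name and the dvce_created_tstamp column come from this post; the article_title column, the function name, and its parameters are assumptions, so check them against the real queries in the dashboard API route.

```typescript
// Builds a ClickHouse query for the most-interacted-with articles in a rolling window.
// Assumption: the table has an `article_title` column; the real schema may differ.
function trendingArticlesQuery(windowMinutes: number, limit: number): string {
  const isPositiveInt = (n: number): boolean => n > 0 && Math.floor(n) === n;
  // Validate before interpolating so only whole positive numbers reach the SQL text.
  if (!isPositiveInt(windowMinutes) || !isPositiveInt(limit)) {
    throw new Error("windowMinutes and limit must be positive integers");
  }
  return [
    "SELECT article_title, count() AS interactions",
    "FROM snowplow_article_interactions",
    `WHERE dvce_created_tstamp >= now() - INTERVAL ${windowMinutes} MINUTE`,
    "GROUP BY article_title",
    "ORDER BY interactions DESC",
    `LIMIT ${limit}`,
  ].join("\n");
}

const trendingSql = trendingArticlesQuery(30, 10);
```

Because the window is evaluated against now() on every request, each dashboard refresh sees the latest rows without any scheduled job in between.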

Getting started: clone, configure, compose

You’ll need Docker, a ClickHouse Cloud account (30-day free trial available), and a few minutes:

- Clone the repo

- Run the table creation query (./clickhouse-queries/create-table-query.sql) in your ClickHouse SQL console

- Copy .env.example to .env and fill in your ClickHouse Cloud connection details

- Run docker-compose up -d

- Browse "The Daily Query" at localhost:3000 — read articles, click ads, scroll around

- Open Snowplow Micro to watch events streaming through the pipeline

- Query SELECT * FROM snowplow_article_interactions ORDER BY dvce_created_tstamp DESC in ClickHouse to see events landing

- Visit localhost:3000/dashboard and hit "Load Data" to see the real-time editorial dashboard populate

Where to take it next

The accelerator is designed as a starting point. Once you have the pipeline running and understand the data flowing through it, there are several high-value follow-ups that map directly to real customer use cases in media:

Personalized content recommendations. Use the real-time engagement data to dynamically update the Featured Article on the homepage based on the most-viewed content from the last 30 minutes. The data is already there — it's a query and a frontend change.

Content exclusion for returning readers. Filter out articles a user has already viewed so they see fresh content on every visit. Snowplow's domain_userid gives you the per-user context to make this work without authentication.
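A minimal sketch of that exclusion logic, assuming you have already fetched the user's view history from ClickHouse. Only domain_userid comes from Snowplow; the article_id field, the catalog shape, and the function name are hypothetical.

```typescript
// Hypothetical row shape: one row per article view, keyed by Snowplow's domain_userid.
interface ViewRow {
  domain_userid: string;
  article_id: string;
}

interface CatalogArticle {
  id: string;
  title: string;
}

// Returns the catalog articles the given user has not viewed yet, in catalog order.
function unseenArticles(
  userId: string,
  views: ViewRow[],
  catalog: CatalogArticle[]
): CatalogArticle[] {
  // Null-prototype object used as a lookup of article ids this user has seen.
  const seen: Record<string, boolean> = Object.create(null);
  for (const view of views) {
    if (view.domain_userid === userId) {
      seen[view.article_id] = true;
    }
  }
  return catalog.filter((article) => seen[article.id] !== true);
}

const sampleCatalog: CatalogArticle[] = [
  { id: "a1", title: "Election Night Live" },
  { id: "a2", title: "Markets Rally" },
  { id: "a3", title: "Weekend Recipes" },
];
const sampleViews: ViewRow[] = [
  { domain_userid: "user-1", article_id: "a1" },
  { domain_userid: "user-2", article_id: "a2" },
  { domain_userid: "user-1", article_id: "a3" },
];
const freshForUser1 = unseenArticles("user-1", sampleViews, sampleCatalog);
```

For user-1 this leaves only "Markets Rally", since the other two articles are already in that user's view history. In practice you would likely push the filter into the ClickHouse query rather than filter in application code.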

Real-time content scoring. Compute a live engagement score for each article — combining view velocity, read depth (via page pings), and ad interaction rates — and use it to programmatically rank content across the site.
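One way to combine those three signals is a simple weighted sum, sketched below. The weights, the field names, and the use of page pings per view as a read-depth proxy are all assumptions chosen for illustration; a production score would need tuning and probably normalization, since the components sit on different scales.

```typescript
// Hypothetical per-article counts over the rolling window.
interface ArticleWindowStats {
  article_title: string;
  views: number;
  page_pings: number;
  ad_interactions: number;
}

// Assumed weights; tune these against your own editorial goals.
const SCORE_WEIGHTS = { velocity: 0.5, depth: 0.3, ads: 0.2 };

function engagementScore(stats: ArticleWindowStats, windowMinutes: number): number {
  if (stats.views === 0 || windowMinutes <= 0) {
    return 0;
  }
  const velocity = stats.views / windowMinutes; // views per minute
  const depth = stats.page_pings / stats.views; // pings per view, a read-depth proxy
  const adRate = stats.ad_interactions / stats.views; // ad interactions per view
  return (
    SCORE_WEIGHTS.velocity * velocity +
    SCORE_WEIGHTS.depth * depth +
    SCORE_WEIGHTS.ads * adRate
  );
}

// Returns a new array sorted by score, highest first; the input is not mutated.
function rankByEngagement(
  all: ArticleWindowStats[],
  windowMinutes: number
): ArticleWindowStats[] {
  return all
    .slice()
    .sort((a, b) => engagementScore(b, windowMinutes) - engagementScore(a, windowMinutes));
}

const sampleStats: ArticleWindowStats[] = [
  { article_title: "Markets Rally", views: 30, page_pings: 30, ad_interactions: 0 },
  { article_title: "Election Night Live", views: 60, page_pings: 120, ad_interactions: 6 },
];
const rankedArticles = rankByEngagement(sampleStats, 30);
```

With a 30-minute window, "Election Night Live" scores about 1.62 and "Markets Rally" about 0.8, so the election story ranks first.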

Cross-platform tracking. Extend the implementation to mobile using Snowplow's iOS and Android SDKs, building toward a unified view of content engagement across platforms.

AI-powered editorial tools. The real-time behavioral data flowing through ClickHouse is a natural input for ML models and agentic applications — predictive audience churn, automated content recommendations, or AI copilots that help editors prioritize stories based on live engagement signals.

The same pattern, applied beyond media

While this accelerator is built for editorial analytics, the underlying architecture (Snowplow streaming pipeline → Snowbridge → ClickHouse Cloud → real-time dashboard) is a general-purpose pattern for any use case where you need sub-second visibility into user behavior. If you're already running ClickHouse (or evaluating it), the same building blocks apply across industries:

Ecommerce: Live merchandising and promotion analytics. Track product interactions, cart behavior, and promo code usage in real time. Surface which products are trending during a flash sale, detect when a checkout funnel is underperforming, or measure the real-time impact of a homepage merchandising change — the same rolling-window dashboard pattern this accelerator demonstrates, applied to SKUs instead of articles.

Gaming: In-session player behavior dashboards. Monitor live player engagement — level completions, in-app purchase triggers, session duration — and surface real-time dashboards for game ops teams. When you push a new event or seasonal content drop, you want to know within minutes whether players are engaging, not after an overnight batch job.

SaaS / Product: Real-time feature adoption and activation tracking. Instrument your product with Snowplow, stream events into ClickHouse, and build internal dashboards that show feature usage as it happens. Especially useful during launches — track activation funnels, identify drop-off points, and measure the impact of onboarding changes in real time rather than waiting for weekly product reviews.

Financial Services: Live transaction and interaction monitoring. Stream customer interaction events into ClickHouse to power real-time dashboards for fraud detection signal monitoring, customer journey visibility, or branch/digital channel performance comparisons. The schema-validated event structure Snowplow enforces is particularly valuable here, where data governance and auditability are non-negotiable.

Travel & Hospitality: Real-time search and booking analytics. Track search queries, listing views, booking funnel progression, and price sensitivity signals. Surface which destinations or properties are trending, detect abandonment patterns in real time, and feed booking velocity data into dynamic pricing models.

In each case, the core pattern is the same: define structured events with Snowplow schemas, stream them through a validated pipeline, land them in ClickHouse, and query them with low latency. This accelerator gives you a working reference implementation of that pattern; the domain-specific events and dashboard queries are the parts you swap out.

Built with ClickHouse

ClickHouse is purpose-built for the workloads this kind of real-time analytics demands. High-throughput ingestion handles traffic spikes during breaking news (or flash sales, or game launches). Sub-second aggregations power live dashboards without pre-computation overhead. And best-in-class compression keeps storage costs manageable even at petabyte scale.

Thank you to the ClickHouse team for their support and collaboration in building this accelerator.

"We're thrilled to partner with Snowplow on this accelerator. The combination of Snowplow's schema-validated event pipeline with ClickHouse's real-time data platform is a natural fit. It's applicable not only for media publishers, but for any team that needs low-latency visibility into user behavior. We're excited to see how brands can adapt this architecture pattern for their own real-time analytics needs." – Abhinav Mehla, VP Global Partners & Alliances @ ClickHouse

Teams looking to take this to production can run against ClickHouse Cloud for a fully managed deployment with automatic scaling and separation of storage and compute.

What this means for data teams

Real-time editorial analytics changes how content teams operate. Instead of reviewing yesterday's numbers in a morning standup, editors can watch engagement unfold as readers interact with content. Trending stories surface within minutes. Ad placement decisions are backed by live data. And the foundation is extensible toward the AI-powered editorial tools that are rapidly becoming table stakes.

The accelerator is open source, the ClickHouse trial is free, and the whole thing runs locally in Docker. If you're a data engineer or developer at a media company — or any organization that needs real-time visibility into user behavior — give it a spin.

Interested in learning more about Snowplow or discussing this setup in further detail? Reach out to our team, and be sure to check out the resources below!

GitHub Repository — Complete codebase: Next.js app, Snowplow tracking, Snowbridge config, ClickHouse schema, and editorial dashboard.

Full Documentation — Step-by-step walkthrough and prerequisites for running the accelerator.

ClickHouse Cloud — 30-day free trial for a fully managed ClickHouse instance.

Snowplow Developer Hub — Tutorials, solution accelerators, video demos, and technical documentation.