AI Agent Memory: 6 Real-Time Behavioral Patterns Beyond Chat History

AI agents are getting smarter, but most of them still start every conversation the same way: cold, contextless, and generic. The user has to re-explain who they are, what they care about, and what they were doing last time. That's a missed opportunity.

For the most part, AI agent memory systems today focus on chat history. They remember what users said: previous interactions, declared preferences, and past conversations. That's useful, but it's incomplete. It's a backward-looking record of a fraction of what actually happened.

There appears to be a category missing from the standard memory taxonomy: real-time behavioral memory. Instead of waiting for users to tell your agent what they want, what if your agent already knew, based on how users behave across your digital estate?

Snowplow Signals is a real-time behavioral intelligence layer that transforms raw first-party event data (clicks, views, searches, purchases, content interactions) into structured, real-time behavioral profiles. Instead of relying on what users say they want, Signals captures what they actually do as it happens, not after a batch job. That gives your AI agents a rich, accurate foundation to work from before the first message is even sent.

Think of it as the difference between an agent that only knows what you've told it versus one that knows what you've actually done. One waits for users to volunteer information. The other watches behavior.

In this post, we’ll walk you through six concrete architecture patterns for building behavior-based AI agent memory, from simple prompt enrichment to reactive real-time architectures.

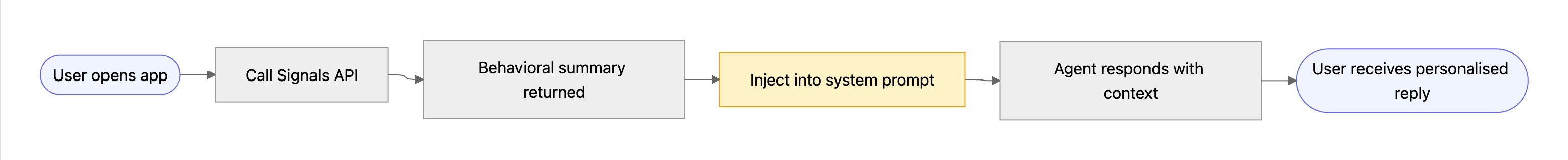

1. Prompt Injection — Real-Time Context at Inference Time

The simplest and most impactful integration. Before your agent handles the first user message, call the Signals API to retrieve a behavioral summary for the current user and inject it into the system prompt or first user message as context.

This gives the agent immediate short-term memory about who it's talking to (their interests, recent activity, preferences, or purchase intent) without requiring the user to explain themselves. Unlike traditional agent memory that relies on chat history, this injects live behavioral context into the context window at inference time.

When to use it: Any agent where personalization matters from message one. Onboarding flows, support bots, shopping assistants, content recommendation agents.

import os

import openai
import requests

# Read the API key from the environment (the variable name is an assumption).
SIGNALS_API_KEY = os.environ["SIGNALS_API_KEY"]


def get_signals_summary(user_id: str) -> str:
    """Fetch the behavioral summary for a user from the Signals API."""
    response = requests.get(
        f"https://api.snowplow.io/signals/v1/users/{user_id}/summary",
        headers={"Authorization": f"Bearer {SIGNALS_API_KEY}"},
        timeout=5,
    )
    response.raise_for_status()
    return response.json().get("behavioral_summary", "")


def run_agent(user_id: str, user_message: str) -> str:
    behavioral_context = get_signals_summary(user_id)

    # Inject the live behavioral context into the system prompt.
    system_prompt = f"""You are a helpful assistant.

Here is behavioral context about this user based on their recent activity:
{behavioral_context}

Use this context to personalise your responses where relevant."""

    response = openai.chat.completions.create(
        model="gpt-4o",
        messages=[
            {"role": "system", "content": system_prompt},
            {"role": "user", "content": user_message},
        ],
    )
    return response.choices[0].message.content
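Because the summary lookup sits on the critical path of the first response, it can help to decide up front what happens when no summary is available or when it is unexpectedly long. The following is a minimal sketch of one way to do that, not part of Signals itself: `build_system_prompt`, `BASE_PROMPT`, and the 1,500-character cap are all illustrative assumptions.

```python
from typing import Optional

# Hypothetical default prompt used when no behavioral context is available.
BASE_PROMPT = "You are a helpful assistant."


def build_system_prompt(behavioral_summary: Optional[str], max_chars: int = 1500) -> str:
    """Return a system prompt, adding behavioral context only when it is usable."""
    summary = (behavioral_summary or "").strip()
    if not summary:
        # New user, API error, or timeout: fall back to the generic prompt.
        return BASE_PROMPT
    if len(summary) > max_chars:
        # A hard cap keeps the context-window cost predictable.
        summary = summary[:max_chars].rstrip() + "..."
    return (
        f"{BASE_PROMPT}\n\n"
        "Here is behavioral context about this user based on their recent activity:\n"
        f"{summary}\n\n"
        "Use this context to personalise your responses where relevant."
    )
```

Wrapping the summary request in a `try`/`except` and passing `None` on failure would let the agent degrade to a generic answer instead of failing the whole request.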

2. Behavioral Interventions — Triggering Your Agent from What Users Do

Rather than waiting for the user to start a conversation, Signals can fire an intervention when it detects a meaningful behavioral change in real time — a user who normally browses daily has gone quiet, someone who added items to a cart hasn't checked out, or a user reaches an 'aha' moment on a purchasing journey.

Your agent subscribes to these behavioral triggers asynchronously and reaches out proactively: a Slack message, an in-app nudge, a personalized email draft, or a new chat session opened with pre-loaded context. This is behavior-based memory management in action: the AI system doesn't just store data, it acts on behavioral patterns the moment they emerge.

When to use it: Re-engagement flows, churn prevention, proactive customer success outreach, cart abandonment recovery.


from fastapi import FastAPI, Request
from openai import AsyncOpenAI

app = FastAPI()
client = AsyncOpenAI()  # reads OPENAI_API_KEY from the environment


def send_message_to_user(user_id: str, message: str) -> None:
    """Placeholder: replace with your own channel (Slack, email, in-app, etc.)."""
    raise NotImplementedError


@app.post("/signals/webhook")
async def handle_intervention(request: Request):
    payload = await request.json()
    user_id = payload["user_id"]
    trigger = payload["trigger_type"]  # e.g. "churn_risk", "cart_abandoned"
    behavioral_context = payload["context"]  # enriched behavioral data from Signals

    # Compose a personalised outreach message
    prompt = f"""A user has triggered a '{trigger}' signal.

Behavioral context:
{behavioral_context}

Draft a short, friendly, personalised outreach message to re-engage them.
Be specific to their recent activity. Do not be pushy."""

    # Await the async client so the LLM call does not block the event loop.
    response = await client.chat.completions.create(
        model="gpt-4o",
        messages=[{"role": "user", "content": prompt}],
    )
    message = response.choices[0].message.content

    # Send via your preferred channel (Slack, email, in-app, etc.)
    send_message_to_user(user_id, message)
    return {"status": "ok"}
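A proactive agent that fires on every trigger can quickly become the pushy experience the prompt is trying to avoid. One possible guard is a per-user, per-trigger cooldown checked before the outreach is drafted. The sketch below is an illustration only: `InterventionCooldown` is a hypothetical helper, it keeps state in process memory, and a multi-instance deployment would need a shared store instead.

```python
import time
from typing import Dict, Optional, Tuple


class InterventionCooldown:
    """In-memory gate allowing one outreach per (user, trigger) per cooldown window."""

    def __init__(self, cooldown_seconds: float = 24 * 3600):
        self.cooldown_seconds = cooldown_seconds
        self._last_sent: Dict[Tuple[str, str], float] = {}

    def should_send(self, user_id: str, trigger_type: str, now: Optional[float] = None) -> bool:
        # `now` is injectable so the gate can be tested without sleeping.
        now = time.time() if now is None else now
        key = (user_id, trigger_type)
        last = self._last_sent.get(key)
        if last is not None and now - last < self.cooldown_seconds:
            return False  # still inside the cooldown window: skip this trigger
        self._last_sent[key] = now
        return True
```

In the webhook handler, a call such as `if not gate.should_send(user_id, trigger): return {"status": "skipped"}` placed before the LLM call would also avoid paying for a draft that is never sent.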

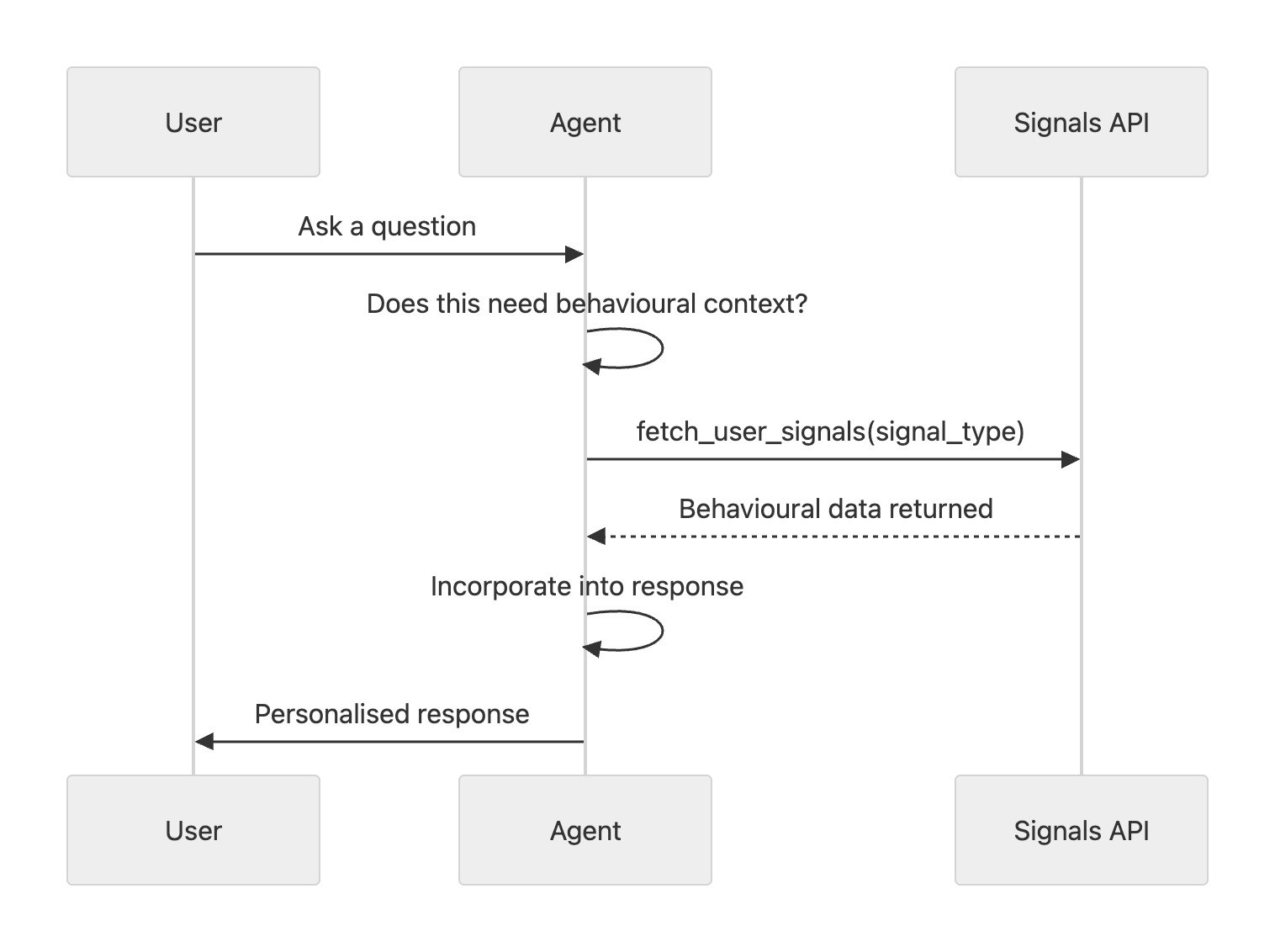

3. Tool Calls — On-Demand Behavioral Memory Retrieval for AI Agents

Instead of loading all behavioral context upfront, expose Signals as a tool the agent can call itself when it decides the information would be useful. The agent learns to reach for behavioral context when it encounters a question about preferences, history, or intent — and leaves it alone when it's not needed.

This is a more dynamic and token-efficient approach than prompt injection because it doesn't consume context window space until the agent actually needs it. It gives the agent more agency over how it uses behavioral data. Where memory storage frameworks like Mem0 or Zep manage conversational recall, this pattern gives your agent on-demand access to real-time observed behavior, which is a fundamentally different type of memory.

When to use it: General-purpose agents where behavioral context is sometimes relevant but not always; complex multi-turn conversations.

import json
import os

import openai
import requests

# Read the API key from the environment (the variable name is an assumption).
SIGNALS_API_KEY = os.environ["SIGNALS_API_KEY"]


def fetch_user_signals(user_id: str, signal_type: str) -> dict:
    """Fetch specific behavioral signals for a user from the Signals API."""
    response = requests.get(
        f"https://api.snowplow.io/signals/v1/users/{user_id}/signals",
        params={"type": signal_type},
        headers={"Authorization": f"Bearer {SIGNALS_API_KEY}"},
        timeout=5,
    )
    response.raise_for_status()
    return response.json()


tools = [
    {
        "type": "function",
        "function": {
            "name": "fetch_user_signals",
            "description": "Retrieve behavioral signals for the current user from Snowplow Signals. Use this when you need to understand the user's preferences, recent activity, or behavioral trends to give a more personalised response.",
            "parameters": {
                "type": "object",
                "properties": {
                    "signal_type": {
                        "type": "string",
                        "enum": ["content_preferences", "purchase_intent", "engagement_trends", "product_usage"],
                        "description": "The category of behavioral signals to retrieve.",
                    }
                },
                "required": ["signal_type"],
            },
        },
    }
]


def run_agent_with_tools(user_id: str, user_message: str) -> str:
    messages = [{"role": "user", "content": user_message}]
    response = openai.chat.completions.create(
        model="gpt-4o", messages=messages, tools=tools
    )
    assistant_message = response.choices[0].message

    # Handle tool calls if the agent requests behavioral data
    if assistant_message.tool_calls:
        messages.append(assistant_message)
        # Every tool call needs a matching tool message, so answer all of them.
        for tool_call in assistant_message.tool_calls:
            args = json.loads(tool_call.function.arguments)
            signals_data = fetch_user_signals(user_id, args["signal_type"])
            messages.append({
                "role": "tool",
                "tool_call_id": tool_call.id,
                "content": json.dumps(signals_data),
            })

        # Final response with behavioral context
        response = openai.chat.completions.create(
            model="gpt-4o", messages=messages, tools=tools
        )

    return response.choices[0].message.content
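The handler above trusts whatever arguments the model produces. Since tool arguments are model output, it may be worth validating them and returning errors as tool results, so the model can recover instead of the request crashing. The sketch below shows one way to do that under stated assumptions: `execute_signal_tool_call` and `ALLOWED_SIGNAL_TYPES` are hypothetical names, and `fetch` stands in for whatever function actually calls Signals.

```python
import json
from typing import Callable

# Mirrors the enum declared in the tool schema.
ALLOWED_SIGNAL_TYPES = {
    "content_preferences",
    "purchase_intent",
    "engagement_trends",
    "product_usage",
}


def execute_signal_tool_call(
    user_id: str, raw_arguments: str, fetch: Callable[[str, str], dict]
) -> str:
    """Validate model-supplied arguments and return a JSON string for the tool message."""
    try:
        args = json.loads(raw_arguments)
    except json.JSONDecodeError:
        return json.dumps({"error": "arguments were not valid JSON"})

    signal_type = args.get("signal_type") if isinstance(args, dict) else None
    if not isinstance(signal_type, str) or signal_type not in ALLOWED_SIGNAL_TYPES:
        return json.dumps({"error": f"unsupported signal_type: {signal_type!r}"})

    try:
        return json.dumps(fetch(user_id, signal_type))
    except Exception as exc:  # report lookup failures to the model instead of raising
        return json.dumps({"error": f"signals lookup failed: {exc}"})
```

The returned string can be used directly as the `content` of the `tool` message, which keeps the conversation valid even when the lookup fails.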

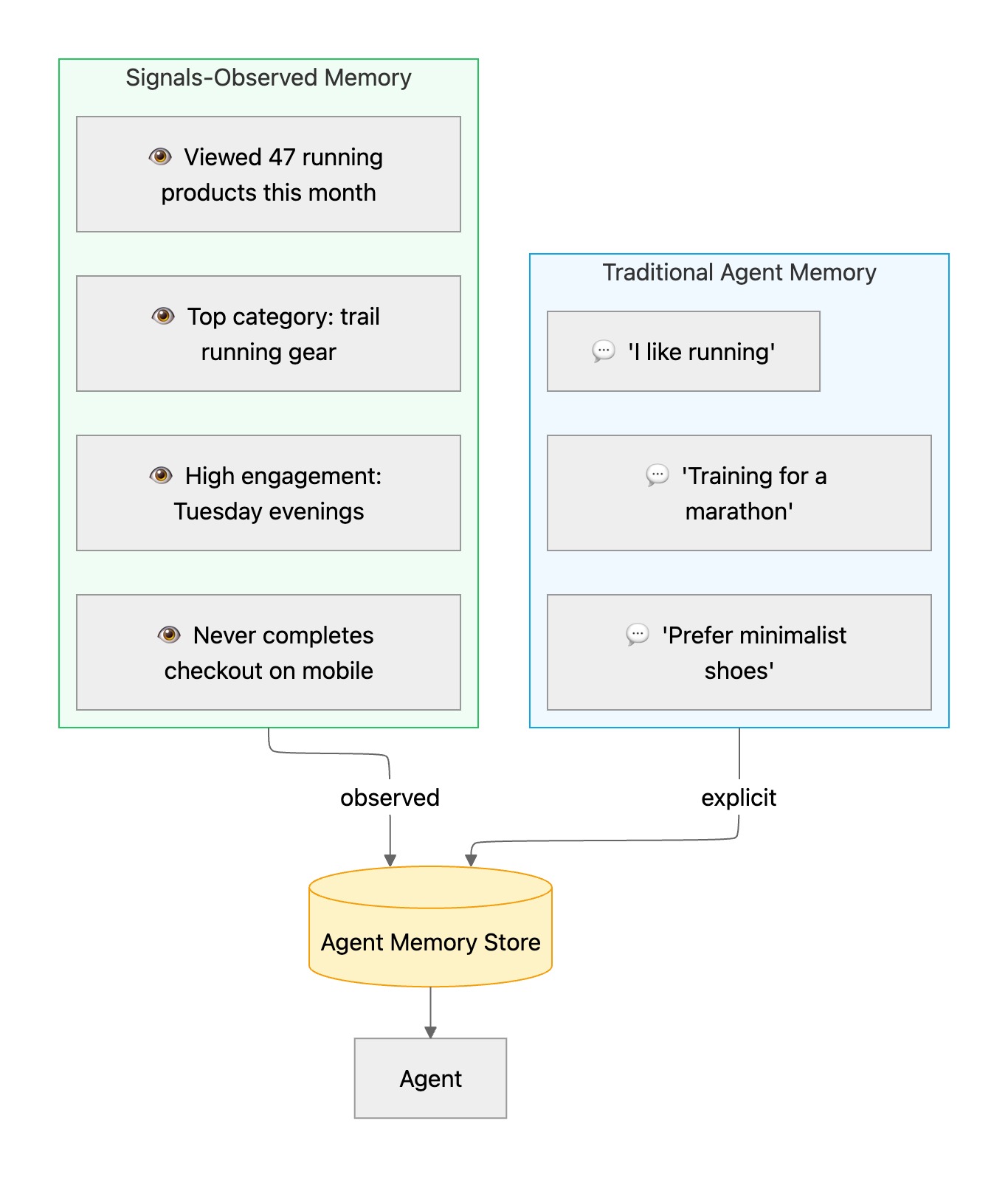

4. Long-Term Memory — Writing Observed Behavior to Your Agent's Memory Store

Traditional agent memory is built almost entirely from what the user has said — explicit statements made during previous interactions. "I like running." "I'm training for a marathon." "I prefer minimalist shoes." This is valuable, but it's incomplete: it captures declared preferences, not actual behavior, and it only reflects moments when the user chose to share something.

In the standard AI agent memory taxonomy, this is the difference between semantic memory (general facts and user preferences) and episodic memory (records of specific experiences). Most agent memory systems only build semantic memory from chat history. Signals lets you build both, from observed behavior.

Signals lets you close that gap. Alongside the explicit conversational record, you can write observed behavioral trends directly into the agent's long-term memory store: facts derived from what the user actually does across your product. The result is a much richer, more accurate model of the user that the agent can draw on across sessions.

At the end of a session (or on a schedule), write Signals-derived behavioral trends directly into the agent's long-term memory store. The next time the user interacts, the agent already knows their most-visited content categories, their preferred interaction style, their product tier — without needing to re-infer it from conversation history.

When to use it: Persistent agents used across multiple sessions; assistant-style agents that need to build up a model of the user over time. Note: you could achieve some of this by querying your data warehouse directly. The difference is Signals delivers pre-structured, real-time behavioral profiles, rather than raw event tables that require transformation before your agent can use them.

import os

import requests
from mem0 import MemoryClient  # or any memory framework: Zep, LangMem, etc.

# Read API keys from the environment (the variable names are assumptions).
SIGNALS_API_KEY = os.environ["SIGNALS_API_KEY"]
MEM0_API_KEY = os.environ["MEM0_API_KEY"]

memory_client = MemoryClient(api_key=MEM0_API_KEY)


def sync_signals_to_memory(user_id: str) -> None:
    # Pull the latest behavioral trends from Signals
    response = requests.get(
        f"https://api.snowplow.io/signals/v1/users/{user_id}/trends",
        headers={"Authorization": f"Bearer {SIGNALS_API_KEY}"},
        timeout=5,
    )
    response.raise_for_status()
    trends = response.json().get("trends", [])

    # Format trends as natural language facts and write to agent memory
    memories = [
        {"role": "system", "content": f"Behavioral insight: {trend['description']}"}
        for trend in trends
    ]
    # Example memory entries:
    # "Most frequently viewed content category: running shoes"
    # "Typical session time: evenings between 7–10pm"
    # "Has never completed a checkout on mobile"

    # Skip the write when Signals returned no trends.
    if memories:
        memory_client.add(memories, user_id=user_id)
    print(f"Synced {len(memories)} behavioral memories for user {user_id}")


# Run on a schedule or after each session
sync_signals_to_memory(user_id="user_abc123")
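If the sync runs on a schedule, the same trend is likely to come back on consecutive runs, and writing it again each time could clutter the memory store. One option is to fingerprint each trend description and write only the ones not seen before. This is a minimal sketch under assumptions: `trend_fingerprint` and `select_new_trends` are hypothetical helpers, and the `seen` set would need to live in durable storage in practice.

```python
import hashlib
from typing import Iterable, List, Set


def trend_fingerprint(user_id: str, description: str) -> str:
    """Stable hash of a trend, ignoring case and whitespace differences."""
    normalized = " ".join(description.lower().split())
    return hashlib.sha256(f"{user_id}:{normalized}".encode("utf-8")).hexdigest()


def select_new_trends(user_id: str, descriptions: Iterable[str], seen: Set[str]) -> List[str]:
    """Return descriptions not written before, recording them in `seen`."""
    fresh = []
    for description in descriptions:
        fingerprint = trend_fingerprint(user_id, description)
        if fingerprint in seen:
            continue  # already in the memory store from an earlier sync
        seen.add(fingerprint)
        fresh.append(description)
    return fresh
```

Some memory frameworks do their own deduplication or consolidation, so whether this step is needed depends on the store you write to.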

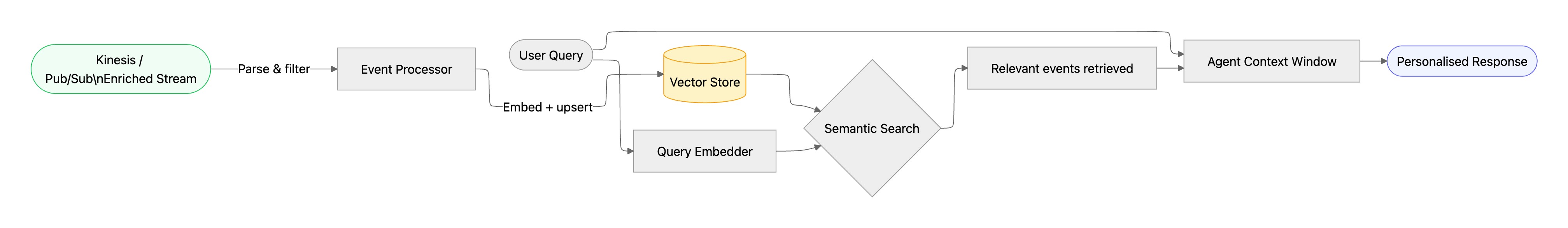

5. Behavioral RAG — Retrieval Augmented Generation for Event-Level Agent Memory

🔬 Prototype: We're actively building this as a first-class integration. Today it requires consuming the Snowplow Enriched stream directly and running your own indexing pipeline — the architecture below shows exactly how. If this pattern is interesting to you, we'd love to hear from you.

The previous approaches either load context statically (prompt injection, memory) or on demand (tool call). Behavioral RAG sits between them. Rather than summarizing user behavior into a fixed blob, you embed and index enriched behavioral events into a vector database. At query time, the agent retrieves the most semantically relevant events for the current conversation using retrieval augmented generation.

The result is a highly targeted, query-aware slice of behavioral context. If the user asks "what running shoes would you recommend?", the agent retrieves events related to footwear browsing and running content — not the user's checkout history or homepage visits. This is how memory works when you need precision over breadth — think of it as episodic memory with semantic search.

The source of truth here is the Snowplow Enriched event stream — available via Kinesis (AWS) or Pub/Sub (GCP) depending on your pipeline. Each enriched event is a TSV record containing the full canonical Snowplow event schema, including user identifiers, event type, page context, and any custom entities.
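To make the TSV point concrete, here is a minimal sketch of turning one tab-separated record into a dictionary. It deliberately takes the column order as a parameter instead of hard-coding it: the real enriched event has many more fields, their order should come from the canonical event model for your pipeline version, and the three-column layout used in the usage note is purely illustrative. `parse_enriched_tsv` is a hypothetical helper; in practice you would likely reach for one of Snowplow's Analytics SDKs.

```python
from typing import Dict, Sequence


def parse_enriched_tsv(line: str, field_names: Sequence[str]) -> Dict[str, str]:
    """Map one tab-separated record onto field names, dropping empty columns."""
    values = line.rstrip("\n").split("\t")
    # zip stops at the shorter sequence, so extra or missing columns do not raise.
    return {name: value for name, value in zip(field_names, values) if value != ""}
```

For example, `parse_enriched_tsv("web\t\tpage_view", ["platform", "user_id", "event_name"])` yields a dictionary with `platform` and `event_name` and omits the empty `user_id` column.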

When to use it: High-volume users with rich behavioral histories; recommendation agents; search assistants where precision matters.

Here's an example for Pub/Sub (GCP); Kinesis (AWS) would follow a similar process:

import chromadb
from google.cloud import pubsub_v1
from openai import OpenAI

client = OpenAI()  # reads OPENAI_API_KEY from the environment
chroma = chromadb.PersistentClient(path="./behavioral_index")  # storage path is an assumption

# parse_enriched_event and format_event_description are helpers you supply:
# the first turns an enriched TSV line into a dict, the second renders that
# dict as a short natural-language sentence for embedding.


def consume_and_index_pubsub(subscription_path: str, target_user_id: str):
    subscriber = pubsub_v1.SubscriberClient()
    collection = chroma.get_or_create_collection(f"user_{target_user_id}_events")

    def callback(message):
        event = parse_enriched_event(message.data.decode("utf-8"))
        # Skip events that belong to other users.
        if event.get("domain_userid") != target_user_id:
            message.ack()
            return

        description = format_event_description(event)
        embedding = client.embeddings.create(
            input=description, model="text-embedding-3-small"
        ).data[0].embedding

        # Upsert keyed on event_id so redelivered messages do not create duplicates.
        collection.upsert(
            ids=[event["event_id"]],
            embeddings=[embedding],
            documents=[description],
            metadatas=[{"timestamp": event.get("derived_tstamp", ""), "event_name": event.get("event_name", "")}],
        )
        message.ack()

    streaming_pull_future = subscriber.subscribe(subscription_path, callback=callback)
    streaming_pull_future.result()  # blocks; run in a background thread in production


def retrieve_relevant_context(user_id: str, query: str, top_k: int = 5) -> list[str]:
    collection = chroma.get_collection(f"user_{user_id}_events")
    query_embedding = client.embeddings.create(
        input=query, model="text-embedding-3-small"
    ).data[0].embedding
    results = collection.query(query_embeddings=[query_embedding], n_results=top_k)
    return results["documents"][0]


def run_agent_with_behavioral_rag(user_id: str, user_message: str) -> str:
    relevant_events = retrieve_relevant_context(user_id, user_message)
    context_block = "\n".join(f"- {e}" for e in relevant_events)

    system_prompt = f"""You are a helpful assistant.

The following behavioral events are relevant to the user's current query:
{context_block}

Use these to inform your response where appropriate."""

    response = client.chat.completions.create(
        model="gpt-4o",
        messages=[
            {"role": "system", "content": system_prompt},
            {"role": "user", "content": user_message},
        ],
    )
    return response.choices[0].message.content
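The example above calls `format_event_description` without defining it. What that function emits matters, because the sentence is what gets embedded and later matched against the user's query. The sketch below is one possible shape, assuming the parsed event is a dictionary keyed by field names such as `event_name`, `page_title`, `page_urlpath`, and `derived_tstamp`; which fields you include is a design choice, not something prescribed by Signals.

```python
def format_event_description(event: dict) -> str:
    """Render a parsed event as one short sentence suitable for embedding."""
    name = event.get("event_name") or "unknown"
    sentence = f"User triggered the {name} event"

    title = event.get("page_title")
    if title:
        sentence += f' on page "{title}"'

    path = event.get("page_urlpath")
    if path:
        sentence += f" (path {path})"

    tstamp = event.get("derived_tstamp")
    if tstamp:
        sentence += f" at {tstamp}"

    return sentence + "."
```

Descriptions that carry human-readable content (page titles, product names, search terms) tend to retrieve better than ones built from identifiers alone, since the query side is natural language.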

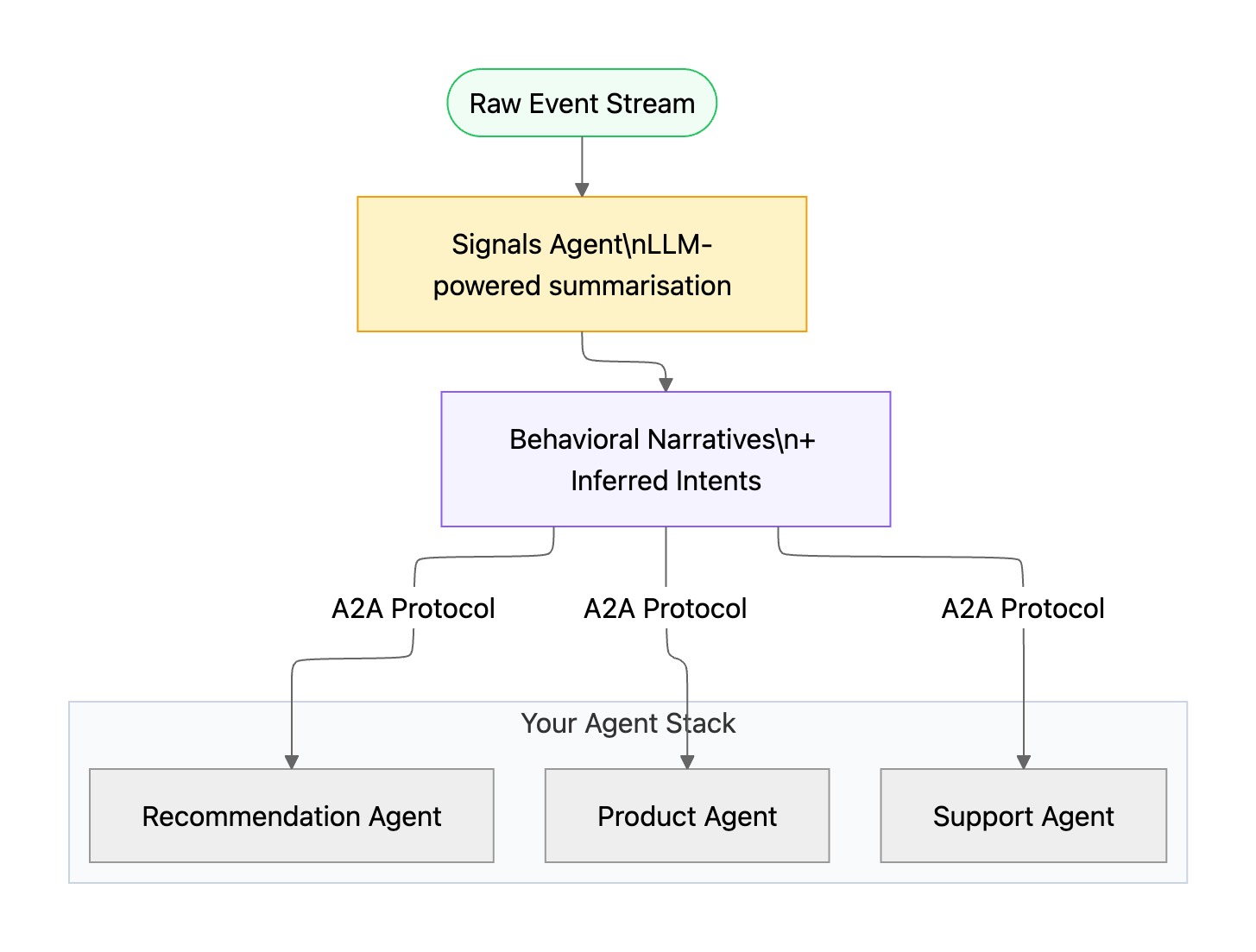

6. Signals Agents + A2A — The Zero-Integration Behavioral Memory Layer

🚀 Coming in the next few months. Sign up to be notified of availability.

All of the previous patterns share a common assumption: your application code is responsible for calling Signals, shaping the data, and deciding how to inject it into your agent. That works well, but it puts the integration burden on you.

We're building something that flips the model. Signals Agents is a new capability that makes Signals itself an agent — one that uses LLMs to continuously read and summarize the raw behavioral event stream, generating natural language session narratives and predicted user interests in real time.

Instead of your agent receiving a list of statistics or a data payload, it receives something like:

"This user appears to be preparing for a spring marathon. Over the past two weeks they have systematically compared racing shoes across five brands, with heavy engagement on lightweight and carbon-plate options. Purchase intent is high — they've visited the checkout page twice without converting."

That's not a database query or a memory lookup. That's a real-time behavioral narrative — richer than any memory type built from chat history alone.

The integration model here aligns with the emerging Agent-to-Agent (A2A) protocol. Rather than every team writing custom agent memory code, any A2A-compatible agent in your stack can query Signals Agents directly — a standard interface for real-time behavioral intelligence that works across your entire agent ecosystem. For teams building agents today, this eliminates the memory management overhead entirely.

We'll share more on Signals Agents soon. If you're building agents today and want to be an early design partner, reach out.

How to Choose the Right AI Agent Memory Pattern

These patterns aren't mutually exclusive — in fact, the most capable agents combine several of them:

- Prompt injection for real-time short-term memory at inference time

- Tool calls for dynamic, on-demand behavioral memory retrieval mid-conversation

- Memory writes so long-term memory compounds with observed behavior across sessions

- Interventions so the agent can act on behavioral signals without waiting for a user message

- Behavioral RAG for retrieval augmented generation over event-level episodic memory

- Signals Agents (coming soon) for a zero-integration-code behavioral memory layer via A2A

Comparison Table:

Signals gives your agent a real-time, first-party behavioral foundation that no LLM can replicate on its own and that no amount of chat history can match. The right pattern depends on your agent's architecture, your users' behavioral richness, and how much personalization matters in your context.

If you're building on Snowplow and want to explore what's possible with Signals, get in touch or dive into the Signals documentation.